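For teams that need to deploy and manage Kubernetes on Azure quickly and consistently, clicking through the portal by hand is slow and makes it hard to keep environments consistent. Infrastructure as Code (IaC) addresses this problem. This article shows how to use Terraform, a popular IaC tool, to automate the deployment of a complete AKS (Azure Kubernetes Service) cluster on Azure, covering environment preparation, resource definition, deployment, verification, and cleanup.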

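First, install the Terraform command-line tool on the operating system. The steps below are for CentOS Stream 9:

- Download the Terraform binary package for a specific version (this article uses 1.7.0):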

[root@k8sworker starttech]# wget "https://releases.hashicorp.com/terraform/1.7.0/terraform_1.7.0_linux_amd64.zip"

- Extract the downloaded archive:

[root@k8sworker starttech]# unzip terraform_1.7.0_linux_amd64.zip

- Move the executable into a directory on the system PATH so it can be invoked from anywhere:

[root@k8sworker starttech]# mv terraform /usr/local/bin/

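Initialize Terraform in the project directory. Based on the configuration files (described in section 2, which must already be in place), it downloads the required Azure provider plugin: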

[root@k8sworker starttech]# terraform init

Initializing the backend...

Initializing provider plugins...
- Finding latest version of hashicorp/azurerm...
- Installing hashicorp/azurerm v4.66.0...
- Installed hashicorp/azurerm v4.66.0 (signed by HashiCorp)

Terraform has created a lock file .terraform.lock.hcl to record the provider
selections it made above. Include this file in your version control repository
so that Terraform can guarantee to make the same selections by default when
you run "terraform init" in the future.

Terraform has been successfully initialized!

You may now begin working with Terraform. Try running "terraform plan" to see
any changes that are required for your infrastructure. All Terraform commands
should now work.

If you ever set or change modules or backend configuration for Terraform,
rerun this command to reinitialize your working directory. If you forget, other
commands will detect it and remind you to do so if necessary.

The message "Terraform has been successfully initialized!" confirms that initialization succeeded.
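The init output starts with "Initializing the backend...". With no backend block, Terraform keeps state in a local terraform.tfstate file, which is fine for a single-user demo. For a team, a remote backend is a common choice. A minimal sketch using the azurerm backend, assuming a storage account and container that already exist (all four values below are hypothetical placeholders, not part of the original setup):

```hcl
# Sketch only: remote state in Azure Blob Storage.
# Every value here is a hypothetical placeholder. The storage account and
# container must be created beforehand, outside this configuration.
terraform {
  backend "azurerm" {
    resource_group_name  = "tfstate-rg"
    storage_account_name = "tfstatedemo12345"
    container_name       = "tfstate"
    key                  = "aks-demo.terraform.tfstate"
  }
}
```

After adding or changing a backend block, terraform init has to be run again, as the init output itself points out.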

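A typical Terraform project consists of several .tf files that define providers, variables, and resources.

2.1 Provider configuration (providers.tf)

This file specifies the required Terraform version and the cloud provider (Azure in this case).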

terraform {
  required_version = ">= 1.0"

  required_providers {
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 4.0"
    }
  }
}

provider "azurerm" {
  features {}
}
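With azurerm 4.x, the provider needs a subscription ID, taken either from the ARM_SUBSCRIPTION_ID environment variable or from the provider block. The providers.tf above sets neither, so it presumably relies on the environment variable. As an alternative sketch, the ID can be passed in as a variable. The variable name subscription_id is an assumption made for illustration, and this provider block would replace the one above rather than sit next to it:

```hcl
# Hypothetical variable, not part of the original variables.tf.
variable "subscription_id" {
  description = "Azure subscription ID to deploy into"
  type        = string
}

provider "azurerm" {
  features {}

  # Explicit subscription instead of relying on ARM_SUBSCRIPTION_ID.
  subscription_id = var.subscription_id
}
```

The value could then be supplied with -var, a .tfvars file, or the TF_VAR_subscription_id environment variable.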

2.2 Variable definitions (variables.tf)

Variables make the configuration more flexible and easier to reuse and maintain.

# ==========================================
# Common variables
# ==========================================
variable "location" {
  description = "Azure region"
  type        = string
  default     = "westeurope"
}

variable "environment" {
  description = "Environment name"
  type        = string
  default     = "demo"
}

# ==========================================
# Resource Group variables
# ==========================================
variable "resource_group_name" {
  description = "Resource group name"
  type        = string
  default     = "test-rg"
}

# ==========================================
# Virtual Network variables
# ==========================================
variable "vnet_name" {
  description = "Virtual network name"
  type        = string
  default     = "vnet-aks-demo"
}

variable "vnet_address_space" {
  description = "VNet address space"
  type        = list(string)
  default     = ["10.0.0.0/16"]
}

# ==========================================
# Subnet variables
# ==========================================
variable "node_subnet_name" {
  description = "AKS node subnet name"
  type        = string
  default     = "snet-aks-nodes"
}

variable "node_subnet_prefix" {
  description = "AKS node subnet address prefix"
  type        = string
  default     = "10.0.1.0/24"
}

# ==========================================
# AKS variables
# ==========================================
variable "aks_name" {
  description = "AKS cluster name"
  type        = string
  default     = "aks-demo"
}

variable "aks_kubernetes_version" {
  description = "Kubernetes version"
  type        = string
  default     = "1.33.7"
}

variable "aks_node_pool_name" {
  description = "Default node pool name"
  type        = string
  default     = "agentpool"
}

variable "aks_node_count" {
  description = "Node count (manual scale)"
  type        = number
  default     = 2
}

variable "aks_node_vm_size" {
  description = "Node VM size"
  type        = string
  default     = "Standard_D2ps_v6"
}

variable "aks_os_sku" {
  description = "Node OS SKU"
  type        = string
  default     = "Ubuntu"
}

# Declared for reference; aks.tf does not read this variable.
variable "aks_scale_method" {
  description = "Scale method: Manual or Autoscale"
  type        = string
  default     = "Manual"
}

variable "aks_network_plugin" {
  description = "Network plugin: azure or kubenet"
  type        = string
  default     = "azure"
}

variable "aks_network_policy" {
  description = "Network policy engine"
  type        = string
  default     = "azure"
}

variable "aks_load_balancer_sku" {
  description = "Load balancer SKU"
  type        = string
  default     = "standard"
}

variable "aks_service_cidr" {
  description = "AKS service CIDR"
  type        = string
  default     = "10.1.0.0/16"
}

variable "aks_dns_service_ip" {
  description = "AKS DNS service IP"
  type        = string
  default     = "10.1.0.10"
}

# Declared for reference; aks.tf does not read this variable
# because docker_bridge_cidr is no longer set there.
variable "aks_docker_bridge_cidr" {
  description = "Docker bridge CIDR"
  type        = string
  default     = "172.17.0.1/16"
}

variable "tags" {
  description = "Resource tags"
  type        = map(string)
  default = {
    managed_by = "terraform"
    project    = "aks-demo"
  }
}
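Several of these variables only accept a small set of values, and a typo would otherwise surface late, during plan or apply. As a hedged sketch, validation blocks can reject bad input early. The allowed values below simply mirror the variable description, and the CIDR check is an illustrative addition rather than part of the original file. These definitions would replace the plain ones above, not be added alongside them:

```hcl
variable "aks_network_plugin" {
  description = "Network plugin: azure or kubenet"
  type        = string
  default     = "azure"

  validation {
    # Accept only the two values named in the description.
    condition     = contains(["azure", "kubenet"], var.aks_network_plugin)
    error_message = "The aks_network_plugin value must be \"azure\" or \"kubenet\"."
  }
}

variable "aks_service_cidr" {
  description = "AKS service CIDR"
  type        = string
  default     = "10.1.0.0/16"

  validation {
    # cidrhost() fails on malformed CIDR strings; can() turns that into false.
    condition     = can(cidrhost(var.aks_service_cidr, 0))
    error_message = "The aks_service_cidr value must be a valid CIDR block, for example 10.1.0.0/16."
  }
}
```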

2.3 Core resource definitions (aks.tf)

This is the most important file. It defines all the Azure resources to create and the dependencies between them.

# ==========================================
# 1. Resource Group
# ==========================================
resource "azurerm_resource_group" "main" {
  name     = var.resource_group_name
  location = var.location

  tags = merge(var.tags, {
    environment = var.environment
  })
}

# ==========================================
# 2. Virtual Network
# ==========================================
resource "azurerm_virtual_network" "main" {
  name                = var.vnet_name
  address_space       = var.vnet_address_space
  location            = azurerm_resource_group.main.location
  resource_group_name = azurerm_resource_group.main.name

  tags = merge(var.tags, {
    environment = var.environment
  })

  depends_on = [azurerm_resource_group.main]
}

# ==========================================
# 3. Node Subnet (Azure CNI Node Subnet)
# ==========================================
resource "azurerm_subnet" "nodes" {
  name                 = var.node_subnet_name
  resource_group_name  = azurerm_resource_group.main.name
  virtual_network_name = azurerm_virtual_network.main.name
  address_prefixes     = [var.node_subnet_prefix]

  depends_on = [azurerm_virtual_network.main]
}

# ==========================================
# 4. Network Security Group for Node Subnet
# ==========================================
resource "azurerm_network_security_group" "nodes" {
  name                = "nsg-${var.node_subnet_name}"
  location            = azurerm_resource_group.main.location
  resource_group_name = azurerm_resource_group.main.name

  security_rule {
    name                       = "Allow_Azure_Load_Balancer"
    priority                   = 100
    direction                  = "Inbound"
    access                     = "Allow"
    protocol                   = "*"
    source_port_range          = "*"
    destination_port_range     = "*"
    source_address_prefix      = "AzureLoadBalancer"
    destination_address_prefix = "*"
  }

  tags = var.tags
}

resource "azurerm_subnet_network_security_group_association" "nodes" {
  subnet_id                 = azurerm_subnet.nodes.id
  network_security_group_id = azurerm_network_security_group.nodes.id
}

# ==========================================
# 5. AKS Cluster
# ==========================================
resource "azurerm_kubernetes_cluster" "main" {
  name                = var.aks_name
  location            = azurerm_resource_group.main.location
  resource_group_name = azurerm_resource_group.main.name
  dns_prefix          = "aksdemo"
  kubernetes_version  = var.aks_kubernetes_version

  # Automatic upgrades: no upgrade channel is set here, so the cluster is not upgraded automatically.
  # Setting the channel to "patch" would limit automatic upgrades to patch releases of the current minor version
  # (the argument is automatic_upgrade_channel in azurerm 4.x; automatic_channel_upgrade is the 3.x name).

  # Default node pool (agentpool, system mode, manual scale)
  default_node_pool {
    name       = var.aks_node_pool_name
    node_count = var.aks_node_count
    vm_size    = var.aks_node_vm_size
    os_sku     = var.aks_os_sku

    # Azure CNI Node Subnet
    vnet_subnet_id = azurerm_subnet.nodes.id

    # Node labels
    node_labels = {
      environment = var.environment
    }

    # System node pool tags
    tags = var.tags
  }

  # Network configuration (Azure CNI + Azure Network Policy)
  network_profile {
    network_plugin    = var.aks_network_plugin
    network_policy    = var.aks_network_policy
    load_balancer_sku = var.aks_load_balancer_sku

    # Service CIDR settings
    service_cidr   = var.aks_service_cidr
    dns_service_ip = var.aks_dns_service_ip

    # docker_bridge_cidr is deprecated in newer provider versions, so it is omitted
    outbound_type = "loadBalancer"
  }

  # Managed identity (SystemAssigned)
  identity {
    type = "SystemAssigned"
  }

  # Azure Policy add-on disabled via azure_policy_enabled
  azure_policy_enabled = false

  # SKU tier: Free (previously Basic, now Free)
  sku_tier = "Free"

  tags = merge(var.tags, {
    environment = var.environment
  })

  depends_on = [
    azurerm_subnet.nodes,
    azurerm_subnet_network_security_group_association.nodes
  ]
}

# ==========================================
# Outputs
# ==========================================
output "resource_group_name" {
  description = "Resource group name"
  value       = azurerm_resource_group.main.name
}

output "vnet_name" {
  description = "Virtual network name"
  value       = azurerm_virtual_network.main.name
}

output "node_subnet_id" {
  description = "AKS node subnet ID"
  value       = azurerm_subnet.nodes.id
}

output "aks_cluster_name" {
  description = "AKS cluster name"
  value       = azurerm_kubernetes_cluster.main.name
}

output "aks_cluster_id" {
  description = "AKS cluster ID"
  value       = azurerm_kubernetes_cluster.main.id
}

output "aks_kubernetes_version" {
  description = "AKS Kubernetes version"
  value       = azurerm_kubernetes_cluster.main.kubernetes_version
}

output "aks_node_pool_name" {
  description = "AKS node pool name"
  value       = azurerm_kubernetes_cluster.main.default_node_pool[0].name
}

output "aks_node_count" {
  description = "AKS node count"
  value       = azurerm_kubernetes_cluster.main.default_node_pool[0].node_count
}

output "aks_fqdn" {
  description = "AKS API server FQDN"
  value       = azurerm_kubernetes_cluster.main.fqdn
}

# Full kubeconfig contents (sensitive)
output "kube_config_raw" {
  description = "Full kubeconfig file contents"
  value       = azurerm_kubernetes_cluster.main.kube_config_raw
  sensitive   = true
}

# Hint for where to write the kubeconfig file
output "kube_config_command" {
  description = "Command to write the kubeconfig to a file"
  value       = "terraform output -raw kube_config_raw > ~/.kube/config-aks-demo && export KUBECONFIG=~/.kube/config-aks-demo"
}

# Azure CLI command to fetch credentials
output "az_get_credentials" {
  description = "Command to fetch credentials with the Azure CLI"
  value       = "az aks get-credentials --resource-group ${azurerm_resource_group.main.name} --name ${azurerm_kubernetes_cluster.main.name} --overwrite-existing"
}
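variables.tf declares aks_scale_method, but aks.tf never reads it, and the default node pool is scaled manually. One way autoscaling could be added is a separate user node pool with the cluster autoscaler enabled. The following is a sketch under assumptions, not part of the original deployment: the resource label user, the pool name userpool, and the 1 to 3 node range are all hypothetical, and the attribute names follow the azurerm 4.x schema.

```hcl
# Hypothetical additional node pool with autoscaling (not in the original aks.tf).
resource "azurerm_kubernetes_cluster_node_pool" "user" {
  name                  = "userpool" # assumed name
  kubernetes_cluster_id = azurerm_kubernetes_cluster.main.id
  mode                  = "User"
  vm_size               = var.aks_node_vm_size
  os_sku                = var.aks_os_sku
  vnet_subnet_id        = azurerm_subnet.nodes.id

  # Let the cluster autoscaler manage the node count within these bounds.
  auto_scaling_enabled = true
  min_count            = 1 # assumed lower bound
  max_count            = 3 # assumed upper bound

  tags = var.tags
}
```

Keeping the system pool fixed and letting only a user pool scale is one common layout. Adding this resource would raise the resource count reported by plan from 6 to 7.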

3. Validate the configuration syntax

Before deploying, check that the Terraform configuration files are syntactically correct.

[root@k8sworker starttech]# ll
total 16
-rw-r--r--. 1 root root 5973 Apr  1 22:15 aks.tf
-rw-r--r--. 1 root root  193 Apr  1 21:34 providers.tf
-rw-r--r--. 1 root root 3152 Apr  1 20:51 variables.tf
[root@k8sworker starttech]# terraform validate
Success! The configuration is valid.

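The message "Success! The configuration is valid." means the configuration has no syntax errors.

4. Plan the deployment (dry run)

Run terraform plan. Terraform computes which resources would be created, changed, or destroyed, without actually making any changes. This is an important safety step.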

[root@k8sworker starttech]# terraform plan -out=tfplan

Terraform used the selected providers to generate the following execution plan. Resource actions are indicated with the following symbols:
  + create

Terraform will perform the following actions:

  # azurerm_kubernetes_cluster.main will be created
  + resource "azurerm_kubernetes_cluster" "main" {
      + ai_toolchain_operator_enabled = false
      + azure_policy_enabled = false
      + current_kubernetes_version = (known after apply)
      + dns_prefix = "aksdemo"
      + fqdn = (known after apply)
      + http_application_routing_zone_name = (known after apply)
      + id = (known after apply)
      + kube_admin_config = (sensitive value)
      + kube_admin_config_raw = (sensitive value)
      + kube_config = (sensitive value)
      + kube_config_raw = (sensitive value)
      + kubernetes_version = "1.33.7"
      + location = "westeurope"
      + name = "aks-demo"
      + node_os_upgrade_channel = "NodeImage"
      + node_resource_group = (known after apply)
      + node_resource_group_id = (known after apply)
      + oidc_issuer_url = (known after apply)
      + portal_fqdn = (known after apply)
      + private_cluster_enabled = false
      + private_cluster_public_fqdn_enabled = false
      + private_dns_zone_id = (known after apply)
      + private_fqdn = (known after apply)
      + resource_group_name = "test-rg"
      + role_based_access_control_enabled = true
      + run_command_enabled = true
      + sku_tier = "Free"
      + support_plan = "KubernetesOfficial"
      + tags = {
          + "environment" = "demo"
          + "managed_by" = "terraform"
          + "project" = "aks-demo"
        }
      + workload_identity_enabled = false

      + default_node_pool {
          + kubelet_disk_type = (known after apply)
          + max_pods = (known after apply)
          + name = "agentpool"
          + node_count = 2
          + node_labels = {
              + "environment" = "demo"
            }
          + orchestrator_version = (known after apply)
          + os_disk_size_gb = (known after apply)
          + os_disk_type = "Managed"
          + os_sku = "Ubuntu"
          + scale_down_mode = "Delete"
          + tags = {
              + "managed_by" = "terraform"
              + "project" = "aks-demo"
            }
          + type = "VirtualMachineScaleSets"
          + ultra_ssd_enabled = false
          + vm_size = "Standard_D2ps_v6"
          + vnet_subnet_id = (known after apply)
          + workload_runtime = (known after apply)
        }

      + identity {
          + principal_id = (known after apply)
          + tenant_id = (known after apply)
          + type = "SystemAssigned"
        }

      + network_profile {
          + dns_service_ip = "10.1.0.10"
          + ip_versions = (known after apply)
          + load_balancer_sku = "standard"
          + network_data_plane = "azure"
          + network_mode = (known after apply)
          + network_plugin = "azure"
          + network_policy = "azure"
          + outbound_type = "loadBalancer"
          + pod_cidr = (known after apply)
          + pod_cidrs = (known after apply)
          + service_cidr = "10.1.0.0/16"
          + service_cidrs = (known after apply)
        }
    }

  # azurerm_network_security_group.nodes will be created
  + resource "azurerm_network_security_group" "nodes" {
      + id = (known after apply)
      + location = "westeurope"
      + name = "nsg-snet-aks-nodes"
      + resource_group_name = "test-rg"
      + security_rule = [
          + {
              + access = "Allow"
              + description = ""
              + destination_address_prefix = "*"
              + destination_address_prefixes = []
              + destination_application_security_group_ids = []
              + destination_port_range = "*"
              + destination_port_ranges = []
              + direction = "Inbound"
              + name = "Allow_Azure_Load_Balancer"
              + priority = 100
              + protocol = "*"
              + source_address_prefix = "AzureLoadBalancer"
              + source_address_prefixes = []
              + source_application_security_group_ids = []
              + source_port_range = "*"
              + source_port_ranges = []
            },
        ]
      + tags = {
          + "managed_by" = "terraform"
          + "project" = "aks-demo"
        }
    }

  # azurerm_resource_group.main will be created
  + resource "azurerm_resource_group" "main" {
      + id = (known after apply)
      + location = "westeurope"
      + name = "test-rg"
      + tags = {
          + "environment" = "demo"
          + "managed_by" = "terraform"
          + "project" = "aks-demo"
        }
    }

  # azurerm_subnet.nodes will be created
  + resource "azurerm_subnet" "nodes" {
      + address_prefixes = [
          + "10.0.1.0/24",
        ]
      + default_outbound_access_enabled = true
      + id = (known after apply)
      + name = "snet-aks-nodes"
      + private_endpoint_network_policies = "Disabled"
      + private_link_service_network_policies_enabled = true
      + resource_group_name = "test-rg"
      + virtual_network_name = "vnet-aks-demo"
    }

  # azurerm_subnet_network_security_group_association.nodes will be created
  + resource "azurerm_subnet_network_security_group_association" "nodes" {
      + id = (known after apply)
      + network_security_group_id = (known after apply)
      + subnet_id = (known after apply)
    }

  # azurerm_virtual_network.main will be created
  + resource "azurerm_virtual_network" "main" {
      + address_space = [
          + "10.0.0.0/16",
        ]
      + dns_servers = (known after apply)
      + guid = (known after apply)
      + id = (known after apply)
      + location = "westeurope"
      + name = "vnet-aks-demo"
      + private_endpoint_vnet_policies = "Disabled"
      + resource_group_name = "test-rg"
      + subnet = (known after apply)
      + tags = {
          + "environment" = "demo"
          + "managed_by" = "terraform"
          + "project" = "aks-demo"
        }
    }

Plan: 6 to add, 0 to change, 0 to destroy.

Changes to Outputs:
  + aks_cluster_id = (known after apply)
  + aks_cluster_name = "aks-demo"
  + aks_fqdn = (known after apply)
  + aks_kubernetes_version = "1.33.7"
  + aks_node_count = 2
  + aks_node_pool_name = "agentpool"
  + az_get_credentials = "az aks get-credentials --resource-group test-rg --name aks-demo --overwrite-existing"
  + kube_config_command = "terraform output -raw kube_config_raw > ~/.kube/config-aks-demo && export KUBECONFIG=~/.kube/config-aks-demo"
  + kube_config_raw = (sensitive value)
  + node_subnet_id = (known after apply)
  + resource_group_name = "test-rg"
  + vnet_name = "vnet-aks-demo"

─────────────────────────────────────────────────────────────────────────────

Saved the plan to: tfplan

To perform exactly these actions, run the following command to apply:
    terraform apply "tfplan"

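The output shows that 6 new resources will be created, which matches the number of resources we defined. The -out=tfplan option saves the execution plan to a file, so that the subsequent apply performs exactly the planned actions.

5. Apply the deployment

Once the plan looks correct, deploy using the saved plan file.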

[root@k8sworker starttech]# terraform apply tfplan

azurerm_resource_group.main: Creating...

azurerm_resource_group.main: Still creating... [10s elapsed]

azurerm_resource_group.main: Still creating... [20s elapsed]

azurerm_resource_group.main: Still creating... [30s elapsed]

azurerm_resource_group.main: Creation complete after 31s [id=/subscriptions/c19511ad-c1ed-4f95-9d17-f0e83dc00af4/resourceGroups/test-rg]

azurerm_virtual_network.main: Creating...

azurerm_network_security_group.nodes: Creating...

azurerm_network_security_group.nodes: Creation complete after 8s [id=/subscriptions/c19511ad-c1ed-4f95-9d17-f0e83dc00af4/resourceGroups/test-rg/providers/Microsoft.Network/networkSecurityGroups/nsg-snet-aks-nodes]

azurerm_virtual_network.main: Still creating... [10s elapsed]

azurerm_virtual_network.main: Creation complete after 11s [id=/subscriptions/c19511ad-c1ed-4f95-9d17-f0e83dc00af4/resourceGroups/test-rg/providers/Microsoft.Network/virtualNetworks/vnet-aks-demo]

azurerm_subnet.nodes: Creating...

azurerm_subnet.nodes: Still creating... [10s elapsed]

azurerm_subnet.nodes: Creation complete after 12s [id=/subscriptions/c19511ad-c1ed-4f95-9d17-f0e83dc00af4/resourceGroups/test-rg/providers/Microsoft.Network/virtualNetworks/vnet-aks-demo/subnets/snet-aks-nodes]

azurerm_subnet_network_security_group_association.nodes: Creating...

azurerm_subnet_network_security_group_association.nodes: Still creating... [10s elapsed]

azurerm_subnet_network_security_group_association.nodes: Creation complete after 12s [id=/subscriptions/c19511ad-c1ed-4f95-9d17-f0e83dc00af4/resourceGroups/test-rg/providers/Microsoft.Network/virtualNetworks/vnet-aks-demo/subnets/snet-aks-nodes]

azurerm_kubernetes_cluster.main: Creating...

azurerm_kubernetes_cluster.main: Still creating... [10s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [20s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [30s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [40s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [50s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [1m0s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [1m10s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [1m20s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [1m30s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [1m40s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [1m50s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [2m0s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [2m10s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [2m20s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [2m30s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [2m40s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [2m50s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [3m0s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [3m10s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [3m20s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [3m30s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [3m40s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [3m50s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [4m0s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [4m10s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [4m20s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [4m30s elapsed]

azurerm_kubernetes_cluster.main: Still creating... [4m40s elapsed]

azurerm_kubernetes_cluster.main: Creation complete after 4m56s [id=/subscriptions/c19511ad-c1ed-4f95-9d17-f0e83dc00af4/resourceGroups/test-rg/providers/Microsoft.ContainerService/managedClusters/aks-demo]

Apply complete! Resources: 6 added, 0 changed, 0 destroyed.

Outputs:

aks_cluster_id = "/subscriptions/c19511ad-c1ed-4f95-9d17-f0e83dc00af4/resourceGroups/test-rg/providers/Microsoft.ContainerService/managedClusters/aks-demo"
aks_cluster_name = "aks-demo"
aks_fqdn = "aksdemo-qe7xqpzn.hcp.westeurope.azmk8s.io"
aks_kubernetes_version = "1.33.7"
aks_node_count = 2
aks_node_pool_name = "agentpool"
az_get_credentials = "az aks get-credentials --resource-group test-rg --name aks-demo --overwrite-existing"
kube_config_command = "terraform output -raw kube_config_raw > ~/.kube/config-aks-demo && export KUBECONFIG=~/.kube/config-aks-demo"
kube_config_raw = <sensitive>
node_subnet_id = "/subscriptions/c19511ad-c1ed-4f95-9d17-f0e83dc00af4/resourceGroups/test-rg/providers/Microsoft.Network/virtualNetworks/vnet-aks-demo/subnets/snet-aks-nodes"
resource_group_name = "test-rg"
vnet_name = "vnet-aks-demo"

The final line, "Apply complete! Resources: 6 added", shows that all resources were created successfully.

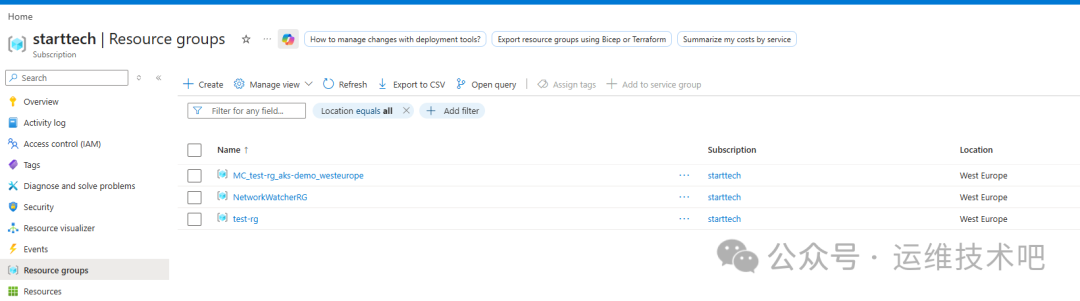

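6. Verify in the Azure portal

After the deployment finishes, sign in to the Azure portal and open the resource group to see all the resources that were created.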

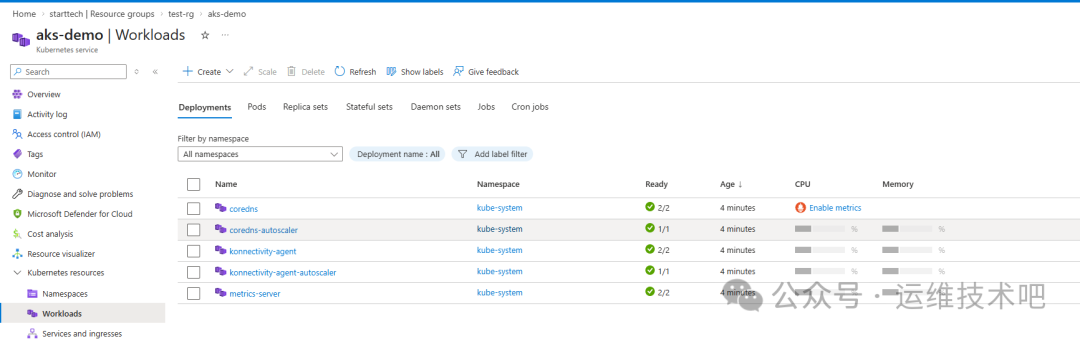

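Open the new AKS cluster. On the "Workloads" page, the core components that the platform deploys automatically (such as CoreDNS and metrics-server) are all running normally.

7. Inspect the AKS cluster with kubectl

We can now connect to the cluster using the kubeconfig from the Terraform outputs. The commands below follow the hint printed in the outputs, except that the file is written to the default path ~/.kube/config (overwriting any existing file there), so KUBECONFIG does not need to be exported: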

[root@k8sworker starttech]# terraform output -raw kube_config_raw > ~/.kube/config
[root@k8sworker starttech]# ls -la ~/.kube/
total 16
drwxr-xr-x.  3 root root   33 Mar 28 14:09 .
dr-xr-x---. 18 root root 4096 Apr  1 22:15 ..
drwxr-x---.  4 root root   35 Mar 28 14:09 cache
-rw-------.  1 root root 9662 Apr  5 13:39 config
[root@k8sworker starttech]# kubectl get nodes
NAME                                STATUS   ROLES    AGE     VERSION
aks-agentpool-37018275-vmss000000   Ready    <none>   4m52s   v1.33.7
aks-agentpool-37018275-vmss000001   Ready    <none>   4m51s   v1.33.7

Both nodes report Ready, so the Kubernetes cluster is up and applications can be deployed to it.
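As an alternative to redirecting terraform output into a file by hand, the kubeconfig could be written by Terraform itself. This is a sketch that assumes the hashicorp/local provider, which the original configuration does not use, so terraform init would need to be run again to install it. The resource label kubeconfig and the target path are illustrative choices:

```hcl
# Hypothetical addition: write the kubeconfig to disk with restrictive permissions.
# Requires the hashicorp/local provider (not part of the original providers.tf).
resource "local_sensitive_file" "kubeconfig" {
  content         = azurerm_kubernetes_cluster.main.kube_config_raw
  filename        = pathexpand("~/.kube/config-aks-demo")
  file_permission = "0600"
}
```

With this in place, exporting KUBECONFIG=~/.kube/config-aks-demo would point kubectl at the new cluster. The kubeconfig is still stored in the Terraform state either way, so the state file should be treated as sensitive.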

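8. Destroy the resources (clean up)

After testing, destroy all created resources to avoid further charges. Terraform makes this straightforward.

8.1 List the resources currently tracked in state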

[root@k8sworker starttech]# terraform state list

azurerm_kubernetes_cluster.main

azurerm_network_security_group.nodes

azurerm_resource_group.main

azurerm_subnet.nodes

azurerm_subnet_network_security_group_association.nodes

azurerm_virtual_network.main

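8.2 Run the destroy

Run terraform destroy directly. It works out the dependencies and destroys all resources in the correct order. Enter yes at the confirmation prompt.

(The terraform destroy output is very long, so the detailed destruction log is omitted here.)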

...

Do you really want to destroy all resources?

Terraform will destroy all your managed infrastructure, as shown above.

There is no undo. Only 'yes' will be accepted to confirm.

Enter a value: yes

...

Destroy complete! Resources: 6 destroyed.

The final message, "Resources: 6 destroyed.", shows that the 6 core resources managed by Terraform have been deleted.
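terraform destroy removes everything tracked in state without distinction, which is what a demo needs. For resources that should survive an accidental destroy, Terraform's lifecycle meta-argument can act as a guard. A minimal sketch, using a hypothetical separate resource group (the label protected and the name shared-rg are illustrative and not part of the original configuration):

```hcl
# Hypothetical resource that must not be destroyed by accident.
resource "azurerm_resource_group" "protected" {
  name     = "shared-rg" # assumed name
  location = var.location

  lifecycle {
    # Any plan that would destroy this resource fails with an error
    # until this setting is removed.
    prevent_destroy = true
  }
}
```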

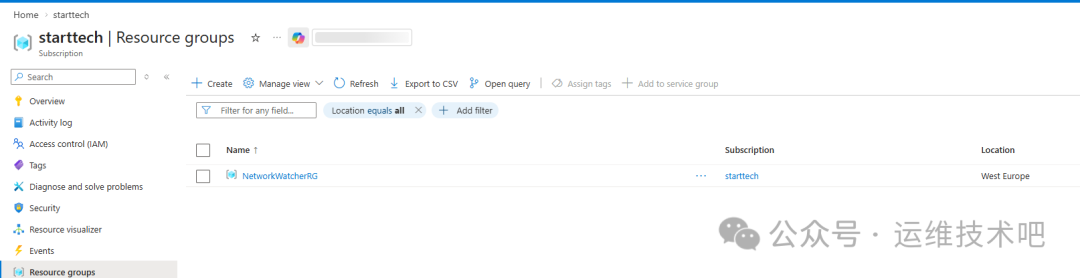

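Note: in the Azure portal you may find that a resource group named NetworkWatcherRG still exists.

Azure creates NetworkWatcherRG automatically to provide network monitoring. It is not part of our Terraform configuration, so terraform destroy does not delete it. This is expected.

To delete this resource group, use the Azure CLI: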

az group delete --name NetworkWatcherRG --yes --no-wait

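Summary

In this walkthrough we managed the full lifecycle of an Azure AKS cluster with Terraform: writing declarative configuration, planning changes, deploying automatically, verifying the result, and cleaning up with a single command. It shows the value of Infrastructure as Code for efficiency, environment consistency, and version control. The same approach is a useful reference both for quickly standing up development and test environments and for building a reliable deployment process for production.

I hope this hands-on guide, shared on 云栈社区, helps you make better use of Terraform for cloud-native resources on Azure. If you run into problems in practice, or have a better configuration, feel free to discuss.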