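Many users have recently been discussing paid plugins such as MemOS as a way to reduce OpenClaw's token consumption. After digging into its source code, however, I found that its core logic is not complicated. It can be reproduced entirely with a local setup, which in some respects even works better.

This article walks you step by step through building a memory-enhancement system with zero cost and zero cloud dependencies. Everything is done inside Cursor, and no coding background is needed.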

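What does this setup solve?

OpenClaw's native memory system has several obvious pain points:

- Global memory bloat: `MEMORY.md` keeps growing, and because the whole file is loaded into every conversation, most of those tokens are wasted.

- Memory depends on the model's diligence: the agent often "forgets" to record key information on its own.

- Imprecise recall: either every memory is stuffed into the context, or no relevant memory is found at all.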

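Our local enhancement is designed to fix these problems:

- Automatic capture: after each conversation, its content is saved to a local database automatically. You don't have to rely on the model to remember to do it.

- On-demand recall: before each conversation starts, the plugin searches for relevant recent conversations, summaries, and user preferences, then injects only what is needed.

- Preference learning: a nightly job analyzes your conversation history and extracts your habits and preferences.

- Fully local: built on a SQLite database and a local Ollama model, with zero cloud dependencies and zero cost.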

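How it works

The workflow splits cleanly into two paths:

1. Real-time path (triggered on every conversation)

The user sends a message

→ The `before_agent_start` hook fires

→ QMD searches long-term memory (the files under `workspace/memory/`)

→ SQLite is searched for relevant recent conversations and summaries

→ User preferences are read from SQLite

→ Everything is assembled into `prependContext` and injected at the front of the prompt

→ The [AI Agent](https://yunpan.plus/f/29-1) replies using the injected memory context (without reading the large memory files again)

→ The `agent_end` hook fires

→ The turn is stored in SQLite (a millisecond-scale INSERT)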
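To make the real-time path concrete, here is a minimal in-memory sketch of the same cycle: capture, then recall, then inject. It illustrates the idea under simplifying assumptions and is not the plugin itself:

- A plain array stands in for the SQLite `conversations` table.
- Matching is a naive `includes()` check rather than the `LIKE` queries used later.
- The names `captureTurn`, `recall`, and `buildContext` are hypothetical.

```javascript
// In-memory sketch of the real-time path. The array stands in for the
// SQLite conversations table; all function names here are hypothetical.
const rows = [];

// agent_end: append the finished turn (the plugin does an INSERT instead).
function captureTurn(agentId, turn) {
  for (const { role, content } of turn) {
    rows.push({ agentId, role, content, createdAt: rows.length });
  }
}

// before_agent_start: newest rows of this agent sharing a word with the prompt.
function recall(agentId, prompt, limit = 5) {
  const keywords = prompt.toLowerCase().split(/\s+/).filter((w) => w.length >= 2);
  return rows
    .filter((r) => r.agentId === agentId)
    .filter((r) => keywords.some((k) => r.content.toLowerCase().includes(k)))
    .sort((a, b) => b.createdAt - a.createdAt)
    .slice(0, limit);
}

// Text that would be prepended to the prompt; empty means "inject nothing".
function buildContext(hits) {
  if (!hits.length) return "";
  return ["## Recent Conversations", ...hits.map((h) => ` - [${h.role}] ${h.content}`)].join("\n");
}

captureTurn("main", [
  { role: "user", content: "I prefer TypeScript for new scripts" },
  { role: "assistant", content: "Noted, I will use TypeScript." },
]);
const context = buildContext(recall("main", "write a typescript helper"));
console.log(context);
```

The point of the design is that recall happens before the model runs, so the agent gets a small, filtered context instead of having to open the memory files itself.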

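2. Nightly path (runs automatically once a day)

A scheduled task (cron job) fires

→ Unprocessed conversation records are read (up to 200 per run, oldest first)

→ They are sent to a locally running Ollama model for analysis

→ User preferences are extracted → written to the preferences table

→ Conversation summaries are generated → written to the summaries table

→ These conversations are marked as processed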
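The nightly control flow can be sketched as a pure function with the LLM call stubbed out. This is a sketch under stated assumptions:

- `analyze` is a hypothetical stand-in for the Ollama request.
- The camel-case `createdAt` field and the boolean `processed` flag simplify the real table's `created_at` and `processed INTEGER` columns.

As in the shell script later on, a batch is marked processed even when the model's reply cannot be parsed.

```javascript
// Sketch of the nightly flow. `analyze` is a hypothetical stand-in for the
// Ollama call: it receives the batch and returns the raw reply text.
function runNightly(conversations, analyze, batchSize = 200) {
  // Oldest unprocessed rows first, capped like the script's LIMIT 200.
  const batch = conversations
    .filter((c) => !c.processed)
    .sort((a, b) => a.createdAt.localeCompare(b.createdAt))
    .slice(0, batchSize);
  if (batch.length === 0) return { preferences: [], summaries: [], processed: 0 };

  let parsed = null;
  try {
    parsed = JSON.parse(analyze(batch));
  } catch {
    // Unparseable reply: nothing is extracted, but the batch is still marked below.
  }
  for (const c of batch) c.processed = true;

  return {
    preferences: Array.isArray(parsed?.preferences) ? parsed.preferences : [],
    summaries: Array.isArray(parsed?.summaries) ? parsed.summaries : [],
    processed: batch.length,
  };
}

const conversations = [
  { id: 1, createdAt: "2026-02-15 10:00:00", content: "Please always answer in English", processed: false },
  { id: 2, createdAt: "2026-02-15 10:00:05", content: "Sure, English it is.", processed: false },
];
const fakeModel = () =>
  JSON.stringify({
    preferences: [{ preference: "Prefers answers in English", type: "explicit", agent_id: "main" }],
    summaries: [],
  });

const result = runNightly(conversations, fakeModel);
console.log(result.processed, result.preferences.length);
```

Always marking the batch is a trade-off. One bad model reply loses that batch's extraction, but the job never gets stuck retrying the same rows forever.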

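Prerequisites

Before you start, make sure your environment meets the following requirements:

- OpenClaw is installed (version 2026.2.13 or later).

- The QMD plugin is installed and configured.

- Ollama is installed (it is used for nightly preference extraction). On macOS with Homebrew: `brew install ollama`

- A small model has been pulled in Ollama, for example: `ollama pull qwen3:8b`

- The `sqlite3`, `curl`, and `jq` command-line tools are available (the nightly script checks for all three).

- Cursor IDE is ready (to follow along with the tutorial).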

Step 1: Create the plugin directories

Open a terminal in Cursor and run the following commands to create the required directory structure:

```bash
mkdir -p ~/.openclaw/extensions/local-memory-recall/lib
mkdir -p ~/.openclaw/extensions/local-memory-recall/scripts
mkdir -p ~/.openclaw/extensions/local-memory-recall/data
```

Step 2: Create the plugin manifest

In Cursor, create a new file at `~/.openclaw/extensions/local-memory-recall/openclaw.plugin.json`

Paste in the following JSON configuration:

```json
{
  "id": "local-memory-recall",
  "name": "Local Memory Recall",
  "description": "Auto-capture conversations and recall relevant memories via local SQLite + QMD. Zero cloud dependencies, zero cost.",
  "version": "1.0.0",
  "kind": "lifecycle",
  "main": "./index.js",
  "configSchema": {
    "type": "object",
    "properties": {
      "agentIds": {
        "type": "array",
        "items": { "type": "string" },
        "description": "Only activate for these agent IDs. Empty = all agents."
      },
      "recallEnabled": { "type": "boolean", "default": true },
      "addEnabled": { "type": "boolean", "default": true },
      "memoryLimit": { "type": "integer", "default": 6 },
      "conversationLimit": { "type": "integer", "default": 5 },
      "preferenceLimit": { "type": "integer", "default": 6 },
      "maxDaysBack": { "type": "integer", "default": 30 },
      "captureStrategy": {
        "type": "string",
        "enum": ["last_turn", "full_session"],
        "default": "last_turn"
      },
      "maxMessageChars": { "type": "integer", "default": 20000 },
      "dbPath": { "type": "string" }
    },
    "additionalProperties": false
  }
}
```
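The `default` values in this schema mainly document intent. The `register()` function in `index.js` (step 4) does not read them; it hardcodes the same fallbacks with `??`. The hypothetical `resolveConfig` helper below reproduces those rules so you can see how a partial config is filled in:

- An explicit value wins, even `0`.
- A missing value takes the default.
- The two boolean switches stay on unless they are set to exactly `false`.

```javascript
// Hypothetical helper mirroring the fallbacks hardcoded in index.js register().
const DEFAULTS = {
  memoryLimit: 6,
  conversationLimit: 5,
  preferenceLimit: 6,
  maxDaysBack: 30,
  captureStrategy: "last_turn",
  maxMessageChars: 20000,
};

function resolveConfig(cfg = {}) {
  // index.js uses `||` for agentIds, so any falsy value becomes [] (= all agents).
  const out = { agentIds: cfg.agentIds || [] };
  // `??` keeps explicit values such as 0 and only fills in null/undefined.
  for (const [key, fallback] of Object.entries(DEFAULTS)) {
    out[key] = cfg[key] ?? fallback;
  }
  // Booleans: anything other than an explicit `false` counts as enabled.
  out.recallEnabled = cfg.recallEnabled !== false;
  out.addEnabled = cfg.addEnabled !== false;
  return out;
}

console.log(resolveConfig({ memoryLimit: 3, addEnabled: false }));
```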

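Step 3: Create the SQLite storage layer

Create a new file at `~/.openclaw/extensions/local-memory-recall/lib/local-store.js`

This is the core database layer, responsible for storing and retrieving all data. Paste in the complete code below: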

```javascript
import { DatabaseSync } from "node:sqlite";
import { mkdirSync, existsSync } from "node:fs";
import { dirname, join } from "node:path";
import { fileURLToPath } from "node:url";

// Default location: <plugin>/data/memories.db. fileURLToPath() decodes characters
// such as spaces (%20) and handles Windows paths, unlike URL.pathname.
const DEFAULT_DB_DIR = join(dirname(fileURLToPath(import.meta.url)), "..", "data");

export class LocalMemoryStore {
  #db;

  constructor(dbPath) {
    const resolvedPath = dbPath || join(DEFAULT_DB_DIR, "memories.db");
    const dir = dirname(resolvedPath);
    if (!existsSync(dir)) mkdirSync(dir, { recursive: true });

    this.#db = new DatabaseSync(resolvedPath);
    // WAL lets readers proceed while one writer (gateway or nightly script) writes;
    // busy_timeout waits up to 3 s for a lock instead of failing immediately.
    this.#db.exec("PRAGMA journal_mode=WAL");
    this.#db.exec("PRAGMA busy_timeout=3000");
    this.#migrate();
  }

  // Create tables and indexes if they do not exist yet (safe to rerun).
  #migrate() {
    this.#db.exec(`
      CREATE TABLE IF NOT EXISTS conversations (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        session_id TEXT,
        agent_id TEXT NOT NULL,
        role TEXT NOT NULL,
        content TEXT NOT NULL,
        created_at TEXT NOT NULL DEFAULT (datetime('now')),
        processed INTEGER NOT NULL DEFAULT 0
      );
      CREATE INDEX IF NOT EXISTS idx_conv_agent_created
        ON conversations(agent_id, created_at DESC);
      CREATE INDEX IF NOT EXISTS idx_conv_processed
        ON conversations(processed, created_at);

      CREATE TABLE IF NOT EXISTS preferences (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        agent_id TEXT NOT NULL,
        preference TEXT NOT NULL,
        type TEXT NOT NULL DEFAULT 'explicit',
        source_conversation_id INTEGER,
        created_at TEXT NOT NULL DEFAULT (datetime('now')),
        updated_at TEXT NOT NULL DEFAULT (datetime('now')),
        UNIQUE(agent_id, preference)
      );
      CREATE INDEX IF NOT EXISTS idx_pref_agent ON preferences(agent_id);

      CREATE TABLE IF NOT EXISTS summaries (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        conversation_group_id TEXT NOT NULL,
        agent_id TEXT NOT NULL,
        summary TEXT NOT NULL,
        created_at TEXT NOT NULL DEFAULT (datetime('now'))
      );
      CREATE INDEX IF NOT EXISTS idx_sum_agent_created
        ON summaries(agent_id, created_at DESC);
    `);
  }

  addConversation({ sessionId, agentId, role, content }) {
    this.#db.prepare(
      `INSERT INTO conversations (session_id, agent_id, role, content) VALUES (?, ?, ?, ?)`
    ).run(sessionId || "", agentId, role, content);
  }

  // Insert a preference, or refresh its type and updated_at if it already exists.
  upsertPreference({ agentId, preference, type, sourceConversationId }) {
    this.#db.prepare(
      `INSERT INTO preferences (agent_id, preference, type, source_conversation_id)
       VALUES (?, ?, ?, ?)
       ON CONFLICT(agent_id, preference) DO UPDATE SET
         type = excluded.type, updated_at = datetime('now')`
    ).run(agentId, preference, type || "explicit", sourceConversationId || null);
  }

  addSummary({ conversationGroupId, agentId, summary }) {
    this.#db.prepare(
      `INSERT INTO summaries (conversation_group_id, agent_id, summary) VALUES (?, ?, ?)`
    ).run(conversationGroupId, agentId, summary);
  }

  markConversationsProcessed(ids) {
    if (!ids?.length) return;
    const placeholders = ids.map(() => "?").join(",");
    this.#db.prepare(
      `UPDATE conversations SET processed = 1 WHERE id IN (${placeholders})`
    ).run(...ids);
  }

  // Summaries first; top up with raw conversation rows if there are not enough.
  searchConversations({ agentId, query, limit = 5, maxDaysBack = 30 }) {
    const summaries = this.#searchSummaries({ agentId, query, limit, maxDaysBack });
    if (summaries.length >= limit) return summaries;
    const remaining = limit - summaries.length;
    const conversations = this.#searchRawConversations({ agentId, query, limit: remaining, maxDaysBack });
    return [...summaries, ...conversations];
  }

  // Keyword LIKE search over summaries; with no usable keywords, return the newest ones.
  #searchSummaries({ agentId, query, limit, maxDaysBack }) {
    const keywords = this.#extractKeywords(query);
    if (!keywords.length) {
      return this.#db.prepare(
        `SELECT summary AS content, 'summary' AS source, created_at FROM summaries
         WHERE agent_id = ? AND created_at > datetime('now', ?) ORDER BY created_at DESC LIMIT ?`
      ).all(agentId, `-${maxDaysBack} days`, limit);
    }
    const likeClause = keywords.map(() => "summary LIKE ?").join(" OR ");
    const likeParams = keywords.map((k) => `%${k}%`);
    return this.#db.prepare(
      `SELECT summary AS content, 'summary' AS source, created_at FROM summaries
       WHERE agent_id = ? AND created_at > datetime('now', ?) AND (${likeClause})
       ORDER BY created_at DESC LIMIT ?`
    ).all(agentId, `-${maxDaysBack} days`, ...likeParams, limit);
  }

  // Same search over raw conversation rows.
  #searchRawConversations({ agentId, query, limit, maxDaysBack }) {
    const keywords = this.#extractKeywords(query);
    if (!keywords.length) {
      return this.#db.prepare(
        `SELECT role, content, 'conversation' AS source, created_at FROM conversations
         WHERE agent_id = ? AND created_at > datetime('now', ?) ORDER BY created_at DESC LIMIT ?`
      ).all(agentId, `-${maxDaysBack} days`, limit);
    }
    const likeClause = keywords.map(() => "content LIKE ?").join(" OR ");
    const likeParams = keywords.map((k) => `%${k}%`);
    return this.#db.prepare(
      `SELECT role, content, 'conversation' AS source, created_at FROM conversations
       WHERE agent_id = ? AND created_at > datetime('now', ?) AND (${likeClause})
       ORDER BY created_at DESC LIMIT ?`
    ).all(agentId, `-${maxDaysBack} days`, ...likeParams, limit);
  }

  getPreferences({ agentId, limit = 6 }) {
    return this.#db.prepare(
      `SELECT preference, type, updated_at FROM preferences
       WHERE agent_id = ? ORDER BY updated_at DESC LIMIT ?`
    ).all(agentId, limit);
  }

  getUnprocessedConversations({ limit = 200 } = {}) {
    return this.#db.prepare(
      `SELECT id, session_id, agent_id, role, content, created_at FROM conversations
       WHERE processed = 0 ORDER BY created_at ASC LIMIT ?`
    ).all(limit);
  }

  // Retention: conversations 30 days, summaries 90 days; preferences are kept.
  cleanup() {
    this.#db.exec(`DELETE FROM conversations WHERE created_at < datetime('now', '-30 days')`);
    this.#db.exec(`DELETE FROM summaries WHERE created_at < datetime('now', '-90 days')`);
  }

  close() { this.#db.close(); }

  // Replace punctuation with spaces, split on whitespace, keep words of 2+ characters
  // (at most 8). Text without spaces, such as Chinese, stays a single keyword.
  #extractKeywords(query) {
    if (!query) return [];
    return query.replace(/[^\p{L}\p{N}\s]/gu, " ").split(/\s+/).filter((w) => w.length >= 2).slice(0, 8);
  }
}
```
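As written, `#extractKeywords` splits only on whitespace. A Chinese prompt with no spaces therefore becomes one long keyword, which a `LIKE '%keyword%'` clause will rarely match.

One possible workaround, shown here as a hypothetical `extractKeywordsCjk` function rather than part of the plugin, splits runs of Han characters into overlapping two-character grams and keeps Latin or digit runs whole.

```javascript
// Hypothetical alternative to #extractKeywords for Chinese text: Han runs are
// split into overlapping 2-character grams; other runs of 2+ chars are kept whole.
function extractKeywordsCjk(query, max = 8) {
  if (!query) return [];
  const out = new Set();
  const tokens = query.replace(/[^\p{L}\p{N}\s]/gu, " ").split(/\s+/);
  for (const token of tokens) {
    // Separate Han runs from Latin/digit runs inside one token, e.g. "用Python写".
    for (const part of token.match(/\p{Script=Han}+|[^\p{Script=Han}]+/gu) ?? []) {
      if (/^\p{Script=Han}+$/u.test(part)) {
        const chars = Array.from(part); // code points, safe for surrogate pairs
        for (let i = 0; i + 1 < chars.length; i++) out.add(chars[i] + chars[i + 1]);
      } else if (part.length >= 2) {
        out.add(part);
      }
    }
  }
  return [...out].slice(0, max);
}

console.log(extractKeywordsCjk("用Python写周报"));
```

The bigrams are OR'ed into `LIKE` clauses, so this trades precision for recall. The `max` cap also means that only the first few bigrams of a long sentence are used.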

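Step 4: Create the main plugin file

Create a new file at `~/.openclaw/extensions/local-memory-recall/index.js`

This is the plugin's main logic, which listens for and handles the hook events. Paste in the complete code below: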

```javascript
import { LocalMemoryStore } from "./lib/local-store.js";

const PLUGIN_TAG = "[local-memory-recall]";

// Cut text to maxLen characters, appending "..." when truncated.
function truncate(text, maxLen) {
  if (!text || !maxLen) return text || "";
  return text.length > maxLen ? `${text.slice(0, maxLen)}...` : text;
}

// Message content is a string or an array of blocks; keep only the text blocks.
function extractText(content) {
  if (!content) return "";
  if (typeof content === "string") return content;
  if (Array.isArray(content)) {
    return content.filter((b) => b?.type === "text").map((b) => b.text).join(" ");
  }
  return "";
}

function pad2(v) { return String(v).padStart(2, "0"); }

// Local time formatted as "YYYY-MM-DD HH:MM".
function formatTime(ts) {
  const d = ts ? new Date(ts) : new Date();
  return `${d.getFullYear()}-${pad2(d.getMonth() + 1)}-${pad2(d.getDate())} ${pad2(d.getHours())}:${pad2(d.getMinutes())}`;
}

// Keep user/assistant text from the last user message to the end of the list.
function pickLastTurnMessages(messages, maxChars) {
  const lastUserIdx = messages.findLastIndex((m) => m?.role === "user");
  if (lastUserIdx === -1) return [];

  const results = [];
  for (const msg of messages.slice(lastUserIdx)) {
    if (msg?.role !== "user" && msg?.role !== "assistant") continue;
    const content = extractText(msg.content);
    if (content) results.push({ role: msg.role, content: truncate(content, maxChars) });
  }
  return results;
}

function buildPrependContext({ qmdResults, conversations, preferences, nowText }) {
  const lines = [];

  lines.push("# Role", "",
    "You are an intelligent assistant with long-term memory. " +
    "Use the retrieved memory fragments below to provide personalized, accurate responses.", "");
  lines.push("# System Context", "", `* Current Time: ${nowText}`, "");
  lines.push("# Memory Data", "");

  // Long-term memory hits from QMD.
  if (qmdResults?.length) {
    lines.push("## Long-term Memory (from knowledge base)", "```text", "<facts>");
    for (const item of qmdResults) {
      const snippet = item.snippet || item.content || "";
      if (snippet) lines.push(` - ${snippet.replace(/\n/g, " ").trim()}`);
    }
    lines.push("</facts>", "```", "");
  }

  // Recent summaries and raw turns from SQLite. created_at comes from SQLite's
  // datetime('now') and is therefore UTC, while nowText above is local time.
  if (conversations?.length) {
    lines.push("## Recent Conversations", "```text", "<recent_context>");
    for (const item of conversations) {
      const time = item.created_at || "";
      if (item.source === "summary") {
        lines.push(` - [${time}] (summary) ${item.content}`);
      } else {
        lines.push(` - [${time}] [${item.role}] ${truncate(item.content, 500)}`);
      }
    }
    lines.push("</recent_context>", "```", "");
  }

  if (preferences?.length) {
    lines.push("## User Preferences", "```text", "<preferences>");
    for (const pref of preferences) {
      const typeLabel = pref.type === "implicit" ? "[Implicit]" : "[Explicit]";
      lines.push(` - ${typeLabel} ${pref.preference}`);
    }
    lines.push("</preferences>", "```", "");
  }

  lines.push("# Memory Safety Protocol", "",
    "Before using any memory above, apply these checks:",
    "1. **Source**: Is this a direct user statement or AI inference?",
    "2. **Attribution**: Is the subject definitely the user?",
    "3. **Relevance**: Does this directly help answer the current query?",
    "4. **Freshness**: If memory conflicts with current intent, prioritize the current query.", "");

  lines.push("# Attention", "",
    "Relevant memory context is already provided above. " +
    "Do NOT read from or write to local `MEMORY.md` or `memory/*` files for reference, since " +
    "they may be outdated or redundant with the injected context. " +
    "Focus on the user's current query.", "");

  return lines.join("\n");
}

export default {
  id: "local-memory-recall",
  name: "Local Memory Recall",
  description: "Auto-capture conversations and recall relevant memories via local SQLite + QMD.",
  kind: "lifecycle",

  register(api) {
    const cfg = api.pluginConfig || {};
    const log = api.logger ?? console;

    const agentIds = cfg.agentIds || [];
    const recallEnabled = cfg.recallEnabled !== false;
    const addEnabled = cfg.addEnabled !== false;
    const memoryLimit = cfg.memoryLimit ?? 6;
    const conversationLimit = cfg.conversationLimit ?? 5;
    const preferenceLimit = cfg.preferenceLimit ?? 6;
    const maxDaysBack = cfg.maxDaysBack ?? 30;
    // Note: captureStrategy is read but not used yet. agent_end below always applies
    // the last-turn rule, so "full_session" currently behaves like "last_turn".
    const captureStrategy = cfg.captureStrategy ?? "last_turn";
    const maxMessageChars = cfg.maxMessageChars ?? 20000;

    let store;
    try {
      store = new LocalMemoryStore(cfg.dbPath);
      log.info?.(`${PLUGIN_TAG} SQLite store initialized`);
    } catch (err) {
      log.error?.(`${PLUGIN_TAG} Failed to init SQLite: ${err}`);
      return;
    }

    // An empty agentIds list means "all agents".
    const isAgentAllowed = (ctx) => {
      if (!agentIds.length) return true;
      return agentIds.includes(ctx?.agentId);
    };

    // Recall: search memory and prepend it to the prompt before the agent runs.
    api.on("before_agent_start", async (event, ctx) => {
      if (!recallEnabled || !isAgentAllowed(ctx)) return;
      if (!event?.prompt || event.prompt.length < 3) return;

      try {
        const agentId = ctx?.agentId || "unknown";
        const nowText = formatTime();

        // QMD long-term memory search; failures are logged and skipped.
        let qmdResults = [];
        try {
          if (api.runtime?.tools?.memory_search) {
            const r = await api.runtime.tools.memory_search({ query: event.prompt, limit: memoryLimit });
            if (r?.results) qmdResults = r.results;
          }
        } catch (e) {
          log.warn?.(`${PLUGIN_TAG} QMD search failed: ${e}`);
        }

        const conversations = store.searchConversations({
          agentId, query: event.prompt, limit: conversationLimit, maxDaysBack,
        });
        const preferences = store.getPreferences({ agentId, limit: preferenceLimit });

        // Nothing relevant found: leave the prompt untouched.
        if (!qmdResults.length && !conversations.length && !preferences.length) return;

        return { prependContext: buildPrependContext({ qmdResults, conversations, preferences, nowText }) };
      } catch (err) {
        log.warn?.(`${PLUGIN_TAG} recall failed: ${err}`);
      }
    });

    // Capture: store the finished turn after a successful agent run.
    api.on("agent_end", async (event, ctx) => {
      if (!addEnabled || !isAgentAllowed(ctx)) return;
      if (!event?.success || !event?.messages?.length) return;

      try {
        const agentId = ctx?.agentId || "unknown";
        const sessionId = ctx?.sessionKey || ctx?.sessionId || "";
        const messages = pickLastTurnMessages(event.messages, maxMessageChars);
        for (const msg of messages) {
          store.addConversation({ sessionId, agentId, role: msg.role, content: msg.content });
        }
      } catch (err) {
        log.warn?.(`${PLUGIN_TAG} capture failed: ${err}`);
      }
    });

    // Prune old rows once when the plugin loads.
    try {
      store.cleanup();
      log.info?.(`${PLUGIN_TAG} Cleanup completed`);
    } catch (err) {
      log.warn?.(`${PLUGIN_TAG} Cleanup failed: ${err}`);
    }
  },
};
```
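To make the `last_turn` capture rule concrete, here is a standalone re-implementation of the same selection logic, run on a hypothetical message array. Some details are assumptions for illustration:

- The message shape (`role` plus string-or-block `content`) is inferred from how `extractText` reads it.
- The `tool` role and `tool_use` block are invented for the example.

```javascript
// Standalone sketch of the last-turn rule used by pickLastTurnMessages().
function textOf(content) {
  if (typeof content === "string") return content;
  if (Array.isArray(content)) {
    return content.filter((b) => b?.type === "text").map((b) => b.text).join(" ");
  }
  return "";
}

function lastTurn(messages) {
  const start = messages.findLastIndex((m) => m?.role === "user");
  if (start === -1) return [];
  return messages
    .slice(start)
    .filter((m) => m?.role === "user" || m?.role === "assistant")
    .map((m) => ({ role: m.role, content: textOf(m.content) }))
    .filter((m) => m.content); // drop messages with no text (e.g. pure tool calls)
}

// Hypothetical conversation: two turns, the second one with a tool call in between.
const messages = [
  { role: "user", content: "old question" },
  { role: "assistant", content: "old answer" },
  { role: "user", content: "What is WAL mode?" },
  { role: "assistant", content: [{ type: "tool_use", id: "t1" }] },
  { role: "tool", content: "raw tool output" },
  { role: "assistant", content: [{ type: "text", text: "WAL lets readers run alongside a writer." }] },
];
console.log(lastTurn(messages));
```

Only the final question and the final text answer are stored. The earlier turn was already captured when it ended, and tool traffic never reaches the database.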

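Step 5: Create the nightly processing script

Create a new file at `~/.openclaw/extensions/local-memory-recall/scripts/nightly-process.sh`

This script runs at night. It sends unprocessed conversations to Ollama and stores the extracted preferences and summaries. Paste in the complete contents below: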

```bash
#!/bin/bash
# Nightly job: extract preferences and generate conversation summaries
set -euo pipefail

SCRIPT_DIR="$(cd "$(dirname "$0")" && pwd)"
DB_PATH="${SCRIPT_DIR}/../data/memories.db"
OLLAMA_URL="${OLLAMA_URL:-http://localhost:11434/api/generate}"
OLLAMA_MODEL="${OLLAMA_MODEL:-qwen3:8b}"
LOG_PREFIX="[nightly-process]"

log() { echo "${LOG_PREFIX} $(date '+%H:%M:%S') $*"; }

for cmd in sqlite3 curl jq; do
  command -v "$cmd" &>/dev/null || { log "ERROR: $cmd not found"; exit 1; }
done

[ -f "$DB_PATH" ] || { log "ERROR: DB not found at $DB_PATH"; exit 1; }
curl -s --max-time 5 http://localhost:11434/api/tags >/dev/null 2>&1 || { log "ERROR: Ollama not running"; exit 1; }

log "Starting..."

# 1. Read only the ID list first (safe: no content is parsed here)
IDS=$(sqlite3 "$DB_PATH" "
  SELECT GROUP_CONCAT(id) FROM (
    SELECT id FROM conversations
    WHERE processed = 0
    ORDER BY created_at ASC
    LIMIT 200
  );" 2>/dev/null || echo "")

[ -z "$IDS" ] && { log "No unprocessed conversations. Done."; exit 0; }

CONV_COUNT=$(echo "$IDS" | tr ',' '\n' | wc -l | tr -d ' ')
log "Found $CONV_COUNT entries."

# 2. Build the conversation text in a temp file (avoids shell-escaping problems)
TMPFILE=$(mktemp)
SQLFILE=$(mktemp)
trap 'rm -f "$TMPFILE" "$SQLFILE"' EXIT

sqlite3 "$DB_PATH" "
  SELECT '[' || created_at || '] [' || agent_id || '] [' || role || ']: ' ||
         SUBSTR(REPLACE(content, CHAR(10), ' '), 1, 500)
  FROM conversations
  WHERE id IN ($IDS)
  ORDER BY created_at ASC;
" > "$TMPFILE" 2>/dev/null
CONV_TEXT=$(cat "$TMPFILE")

# 3. Call Ollama
PROMPT="Analyze these conversations. Output JSON with two fields:
1. \"preferences\": [{\"preference\": \"...\", \"type\": \"explicit|implicit\", \"agent_id\": \"...\"}]
2. \"summaries\": [{\"agent_id\": \"...\", \"summary\": \"...\", \"group_id\": \"YYYY-MM-DD-topic\"}]
Rules: Only extract REAL preferences. Summaries should be 1-2 sentences. Output ONLY valid JSON.
Conversations:
---
${CONV_TEXT}
---"

log "Calling Ollama ($OLLAMA_MODEL)..."
# format: "json" asks Ollama to constrain the reply to valid JSON.
# num_ctx is raised because a 200-row batch can far exceed Ollama's default context
# window (lower it if memory is tight, or reduce LIMIT 200 if batches still get cut off).
# The long timeout allows for slow generation on a local model.
RESPONSE=$(curl -s --max-time 600 "$OLLAMA_URL" \
  -d "$(jq -n --arg model "$OLLAMA_MODEL" --arg prompt "$PROMPT" \
    '{model: $model, prompt: $prompt, stream: false, format: "json",
      options: {temperature: 0.1, num_predict: 4096, num_ctx: 16384}}')" 2>/dev/null || echo "")

RESULT=$(echo "$RESPONSE" | jq -r '.response // empty' 2>/dev/null || echo "")
[ -z "$RESULT" ] && { log "ERROR: Empty Ollama response"; exit 1; }

# Strip a ```json ... ``` fence in case the model wraps its output anyway
CLEAN_JSON=$(echo "$RESULT" | sed 's/^```json//; s/^```//; s/```$//' | tr -d '\r')

if ! echo "$CLEAN_JSON" | jq empty 2>/dev/null; then
  log "WARNING: Invalid JSON from Ollama. Marking as processed anyway."
  sqlite3 "$DB_PATH" "UPDATE conversations SET processed = 1 WHERE id IN ($IDS);"
  exit 0
fi

# 4. Write preferences (through a temp SQL file to avoid special-character problems)
PREF_COUNT=$(echo "$CLEAN_JSON" | jq '.preferences | length' 2>/dev/null || echo "0")
SUM_COUNT=$(echo "$CLEAN_JSON" | jq '.summaries | length' 2>/dev/null || echo "0")

if [ "$PREF_COUNT" -gt 0 ]; then
  > "$SQLFILE"
  echo "$CLEAN_JSON" | jq -c '.preferences[]' 2>/dev/null | while read -r pref; do
    AGENT=$(echo "$pref" | jq -r '.agent_id // "unknown"' | sed "s/'/''/g")
    PTEXT=$(echo "$pref" | jq -r '.preference // empty' | sed "s/'/''/g")
    PTYPE=$(echo "$pref" | jq -r '.type // "explicit"' | sed "s/'/''/g")
    # if/fi instead of `[ ... ] && echo`: an empty last item would otherwise make the
    # loop exit non-zero, and set -e with pipefail would abort the whole script.
    if [ -n "$PTEXT" ]; then
      echo "INSERT INTO preferences (agent_id, preference, type) VALUES ('$AGENT', '$PTEXT', '$PTYPE') ON CONFLICT(agent_id, preference) DO UPDATE SET type=excluded.type, updated_at=datetime('now');" >> "$SQLFILE"
    fi
  done
  if [ -s "$SQLFILE" ]; then
    sqlite3 "$DB_PATH" < "$SQLFILE" 2>/dev/null || log "WARNING: Some preference inserts failed"
  fi
  log "Extracted $PREF_COUNT preferences."
else
  log "No preferences found."
fi

# 5. Write summaries
if [ "$SUM_COUNT" -gt 0 ]; then
  > "$SQLFILE"
  echo "$CLEAN_JSON" | jq -c '.summaries[]' 2>/dev/null | while read -r summ; do
    AGENT=$(echo "$summ" | jq -r '.agent_id // "unknown"' | sed "s/'/''/g")
    STEXT=$(echo "$summ" | jq -r '.summary // empty' | sed "s/'/''/g")
    GID=$(echo "$summ" | jq -r '.group_id // "unknown"' | sed "s/'/''/g")
    if [ -n "$STEXT" ]; then
      echo "INSERT INTO summaries (conversation_group_id, agent_id, summary) VALUES ('$GID', '$AGENT', '$STEXT');" >> "$SQLFILE"
    fi
  done
  if [ -s "$SQLFILE" ]; then
    sqlite3 "$DB_PATH" < "$SQLFILE" 2>/dev/null || log "WARNING: Some summary inserts failed"
  fi
  log "Generated $SUM_COUNT summaries."
else
  log "No summaries generated."
fi

# 6. Mark as processed + clean up
sqlite3 "$DB_PATH" "UPDATE conversations SET processed = 1 WHERE id IN ($IDS);" 2>/dev/null
log "Marked $CONV_COUNT conversations as processed."

sqlite3 "$DB_PATH" "DELETE FROM conversations WHERE created_at < datetime('now', '-30 days');" 2>/dev/null
sqlite3 "$DB_PATH" "DELETE FROM summaries WHERE created_at < datetime('now', '-90 days');" 2>/dev/null

log "Done. Preferences: $PREF_COUNT, Summaries: $SUM_COUNT, Processed: $CONV_COUNT"
```

Then, in the terminal, make the script executable:

```bash
chmod +x ~/.openclaw/extensions/local-memory-recall/scripts/nightly-process.sh
```
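The script's `sed` line only strips a surrounding Markdown code fence before validating the reply with `jq empty`. The sketch below shows the same cleanup step in JavaScript (the document's plugin language). It additionally removes a `<think>...</think>` block, which reasoning models such as qwen3 may emit before the answer. That extra step, and the name `extractJson`, are assumptions for illustration and are not part of the script.

```javascript
// Hypothetical helper: the script's sed cleanup, plus removal of a
// <think>...</think> block that reasoning models such as qwen3 may emit.
function extractJson(raw) {
  let text = String(raw ?? "")
    .replace(/<think>[\s\S]*?<\/think>/g, "")
    .trim();
  // Drop a surrounding ```json ... ``` (or plain ```) fence if present.
  text = text.replace(/^```(?:json)?\s*/i, "").replace(/\s*```$/, "");
  try {
    return JSON.parse(text);
  } catch {
    return null; // invalid JSON: the caller decides (the script marks the batch processed)
  }
}

const reply = '<think>checking...</think>\n```json\n{"preferences":[],"summaries":[]}\n```';
console.log(extractJson(reply));
```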

Step 6: Configure OpenClaw

Edit the `~/.openclaw/openclaw.json` configuration file, find the `plugins.entries` section, and add the following entry:

```json
"local-memory-recall": {
  "enabled": true,
  "config": {
    "agentIds": [],
    "recallEnabled": true,
    "addEnabled": true,
    "memoryLimit": 6,
    "conversationLimit": 5,
    "preferenceLimit": 6,
    "maxDaysBack": 30,
    "captureStrategy": "last_turn"
  }
}
```

Note: setting `agentIds` to an empty array `[]` enables the plugin for all agents. To enable it only for a specific agent (for example `muse`), use `["muse"]`.
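The `agentIds` rule comes down to a single condition. Here it is extracted from `isAgentAllowed()` in `index.js`, with the list passed in explicitly; the agent names are only examples.

```javascript
// Same rule as isAgentAllowed() in index.js: an empty list means "all agents".
function isAgentAllowed(agentIds, agentId) {
  return agentIds.length === 0 || agentIds.includes(agentId);
}

const agents = ["main", "muse", "sentinel"];
console.log(agents.filter((id) => isAgentAllowed([], id)));       // every agent
console.log(agents.filter((id) => isAgentAllowed(["muse"], id))); // only "muse"
```

When the list is non-empty, a context without an `agentId` is rejected. Such runs are skipped rather than being stored under `"unknown"`.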

Step 7: Configure the nightly scheduled job

Edit `~/.openclaw/cron/jobs.json`. Add a comma after the last `}` in the `jobs` array, then paste in the following job definition:

```json
{
  "id": "nightly-memory-process",
  "agentId": "sentinel",
  "name": "Nightly Memory Process",
  "enabled": true,
  "createdAtMs": 1771113600000,
  "updatedAtMs": 1771113600000,
  "schedule": {
    "kind": "cron",
    "expr": "27 3 * * *"
  },
  "sessionTarget": "isolated",
  "wakeMode": "now",
  "payload": {
    "kind": "agentTurn",
    "model": "ollama/qwen3:8b",
    "thinking": "off",
    "message": "Run: bash ~/.openclaw/extensions/local-memory-recall/scripts/nightly-process.sh\nReport results briefly. File output only."
  },
  "delivery": { "mode": "none" },
  "state": {}
}
```

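Note: `"expr": "27 3 * * *"` means the job runs every day at 3:27 a.m. Change it as needed. Choosing a minute that is not on the hour avoids colliding with jobs that run at :00.

If you don't have an agent named `sentinel`, change `agentId` to the ID of an agent that exists, such as your main agent (for example `"main"`).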
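To check when a daily expression such as `27 3 * * *` will fire next, the hypothetical `nextDailyRun` helper below computes the next occurrence. It rests on two assumptions:

- The scheduler evaluates the expression in local time; the source only says "3:27 a.m. every day."
- Only the plain daily form `M H * * *` is handled. This is not a general cron parser.

```javascript
// Next local run time for a plain daily cron expression "M H * * *".
// Hypothetical helper; ranges, steps and lists are deliberately rejected.
function nextDailyRun(expr, from = new Date()) {
  const fields = expr.trim().split(/\s+/);
  if (fields.length !== 5) throw new Error("expected 5 cron fields");
  const [minute, hour, dayOfMonth, month, dayOfWeek] = fields;
  const plainNumbers = /^\d+$/.test(minute) && /^\d+$/.test(hour);
  if (!plainNumbers || Number(hour) > 23 || Number(minute) > 59 ||
      [dayOfMonth, month, dayOfWeek].some((f) => f !== "*")) {
    throw new Error(`not a plain daily expression: ${expr}`);
  }
  const next = new Date(from.getTime());
  next.setHours(Number(hour), Number(minute), 0, 0);
  // Today's slot has already passed: use the same time tomorrow.
  if (next <= from) next.setDate(next.getDate() + 1);
  return next;
}

console.log(nextDailyRun("27 3 * * *").toString());
```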

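Step 8: Restart the gateway and verify

In the terminal, restart the OpenClaw gateway:

```bash
openclaw gateway restart
```

Then check that the plugin has loaded:

```bash
openclaw plugins list
```

You should see output similar to the following, showing that the plugin is loaded:

```text
Local Memory Recall | local-memory-recall | loaded
```

The OpenClaw logs should also contain the plugin's initialization messages:

```text
[local-memory-recall] SQLite store initialized
[local-memory-recall] Cleanup completed
```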

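Step 9: Verify the feature

Now send a message to any of your agents. Then check the database in the terminal to confirm that the conversation was recorded:

```bash
sqlite3 ~/.openclaw/extensions/local-memory-recall/data/memories.db "SELECT id, agent_id, role, substr(content, 1, 50), created_at FROM conversations ORDER BY id DESC LIMIT 5;"
```

If you can see the conversation you just had, the real-time capture path is working. Recall draws on these rows from your next message onward.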

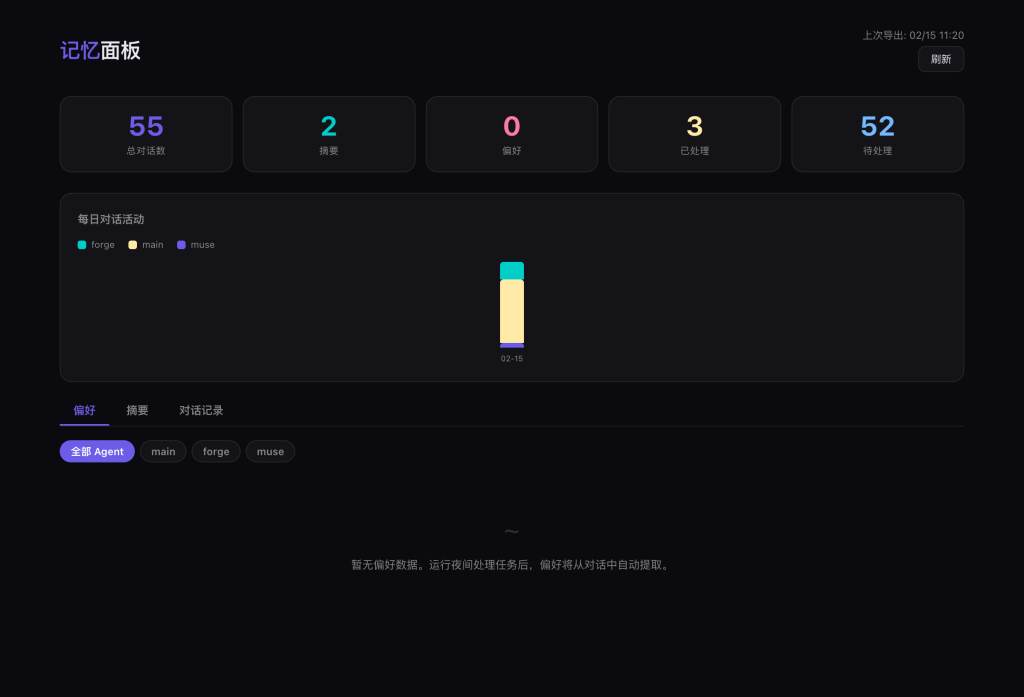

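Step 10: Visual memory dashboard (optional)

If you want a more visual way to browse the extracted preferences, summaries, and conversation records, you can set up a local dashboard.

10.1 Create the data export script

Create `export-dashboard.sh` in the `extensions/local-memory-recall/scripts/` directory, then make it executable with the `chmod` command that follows the script. The script exports the database contents as JSON files that the front-end dashboard can read.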

```bash
#!/usr/bin/env bash
# Export memories.db to JSON files for the dashboard
set -euo pipefail

SCRIPT_DIR="$(cd "$(dirname "$0")" && pwd)"
DB_PATH="${SCRIPT_DIR}/../data/memories.db"
# Output directory: first argument, or ~/.openclaw/canvas/memory-data by default
OUTPUT_DIR="${1:-$HOME/.openclaw/canvas/memory-data}"

if [ ! -f "$DB_PATH" ]; then
  echo "ERROR: Database not found at $DB_PATH"
  exit 1
fi

mkdir -p "$OUTPUT_DIR"

# Statistics
sqlite3 "$DB_PATH" "
  SELECT json_object(
    'total_conversations', (SELECT COUNT(*) FROM conversations),
    'processed_conversations', (SELECT COUNT(*) FROM conversations WHERE processed = 1),
    'unprocessed_conversations', (SELECT COUNT(*) FROM conversations WHERE processed = 0),
    'total_preferences', (SELECT COUNT(*) FROM preferences),
    'total_summaries', (SELECT COUNT(*) FROM summaries),
    'agents', (SELECT json_group_array(DISTINCT agent_id) FROM conversations),
    'earliest_conversation', (SELECT MIN(created_at) FROM conversations),
    'latest_conversation', (SELECT MAX(created_at) FROM conversations),
    'exported_at', datetime('now')
  );
" > "$OUTPUT_DIR/stats.json"

# Preferences, newest first. The sort happens in a subquery: an ORDER BY placed
# after the aggregate would not order the elements inside the JSON array.
sqlite3 "$DB_PATH" "
  SELECT json_group_array(json_object(
    'id', id, 'agent_id', agent_id, 'preference', preference,
    'type', type, 'updated_at', updated_at
  )) FROM (SELECT * FROM preferences ORDER BY updated_at DESC);
" > "$OUTPUT_DIR/preferences.json"

# Summaries (latest 50)
sqlite3 "$DB_PATH" "
  SELECT json_group_array(json_object(
    'id', id, 'agent_id', agent_id, 'summary', summary, 'created_at', created_at
  )) FROM (SELECT * FROM summaries ORDER BY created_at DESC LIMIT 50);
" > "$OUTPUT_DIR/summaries.json"

# Conversations (latest 100, content truncated to 500 characters)
sqlite3 "$DB_PATH" "
  SELECT json_group_array(json_object(
    'id', id, 'session_id', session_id, 'agent_id', agent_id,
    'role', role, 'content', SUBSTR(content, 1, 500),
    'created_at', created_at, 'processed', processed
  )) FROM (SELECT * FROM conversations ORDER BY created_at DESC LIMIT 100);
" > "$OUTPUT_DIR/conversations.json"

# Daily activity per agent
sqlite3 "$DB_PATH" "
  SELECT json_group_array(json_object(
    'date', date, 'agent_id', agent_id, 'count', cnt
  )) FROM (
    SELECT DATE(created_at) AS date, agent_id, COUNT(*) AS cnt
    FROM conversations GROUP BY date, agent_id ORDER BY date DESC LIMIT 90
  );
" > "$OUTPUT_DIR/activity.json"

echo "Export complete: $OUTPUT_DIR"
```

Make it executable:

```bash
chmod +x ~/.openclaw/extensions/local-memory-recall/scripts/export-dashboard.sh
```

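10.2 Add the dashboard HTML file

Create a static HTML file named `memory-dashboard.html` in `~/.openclaw/canvas/`. The page reads the JSON files exported in the previous step and presents the data as charts and lists. It has summary cards at the top, a daily activity bar chart, and three tabs for preferences, summaries, and conversation records.

The memory dashboard shows total conversations, preference and summary counts, and the daily activity trend.

The dashboard file is long (about 700 lines of HTML/CSS/JS), so it is best copied from the relevant repository or generated with the help of a tool such as Cursor.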
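The export script writes `activity.json` as one row per date and agent. A bar chart usually needs one total per day instead. Below is a small sketch of that transformation; the function name `dailyTotals` is hypothetical and not taken from the dashboard file.

```javascript
// Collapse activity.json rows ({ date, agent_id, count }) into one total per
// day, oldest first, which is the shape a simple bar chart needs.
function dailyTotals(rows) {
  const byDate = new Map();
  for (const { date, count } of rows) {
    byDate.set(date, (byDate.get(date) ?? 0) + count);
  }
  return [...byDate.entries()]
    .sort(([a], [b]) => a.localeCompare(b))
    .map(([date, total]) => ({ date, total }));
}

console.log(dailyTotals([
  { date: "2026-02-15", agent_id: "main", count: 4 },
  { date: "2026-02-14", agent_id: "main", count: 2 },
  { date: "2026-02-15", agent_id: "muse", count: 3 },
]));
```

In the page itself, the data could be loaded with `fetch("memory-data/activity.json").then((r) => r.json()).then(dailyTotals)`. This assumes `memory-data/` sits next to `memory-dashboard.html`, as the export script's default output directory suggests. Browsers generally refuse `fetch()` from `file://` pages, which is likely why step 10.3 serves the directory over HTTP.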

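10.3 Usage

- Export the data by running the export script:

```bash
bash ~/.openclaw/extensions/local-memory-recall/scripts/export-dashboard.sh
```

- Start a local HTTP server: serve the Canvas directory with Python's built-in HTTP server.

```bash
cd ~/.openclaw/canvas && python3 -m http.server 18794 --bind 0.0.0.0
```

`--bind 0.0.0.0` makes the whole Canvas directory, including the exported conversation data, reachable from any machine on your LAN. Use `--bind 127.0.0.1` if you only need local access.

- Open the dashboard:

  - On this machine: http://127.0.0.1:18794/memory-dashboard.html

  - From the LAN: http://<your machine's IP>:18794/memory-dashboard.html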

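10.4 Refresh the data automatically

If you have already set up the nightly cron job from step 7, append the following command to that job's `message` field. The dashboard data will then be refreshed every night after processing:

```bash
bash ~/.openclaw/extensions/local-memory-recall/scripts/export-dashboard.sh
```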

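Comparison: MemOS vs. the local setup

| Capability | MemOS Cloud (paid) | This setup (free) |
|---|---|---|
| Automatic conversation capture | Cloud storage | Local SQLite |
| Semantic search | Cloud embeddings | Reuses OpenClaw's QMD |
| Preference extraction | Real-time cloud AI | Nightly Ollama batch job |
| Conversation summaries | None | Yes (generated nightly) |
| Context pre-filtering | None | Yes (fewer repeated tool calls) |
| Data privacy | Data held by a third party | Everything stays local |
| Cost | Paid | Zero |
| Sharing across agents | Same account | Same SQLite database |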

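Frequently asked questions (FAQ)

Q: What if the plugin fails to load?

A: Check that the Node.js version running OpenClaw provides the `node:sqlite` module. The module was added in Node.js 22.5 behind the `--experimental-sqlite` flag, and from 22.13 it is available without the flag. Run `node -v` in the terminal to check your version.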

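Q: The nightly job didn't run. What should I check?

A: First make sure the Ollama service is running (`ollama serve`) and that the required model has been pulled (for example `ollama pull qwen3:8b`).

Q: How can I see the extracted user preferences?

A: Query the database directly:

```bash
sqlite3 ~/.openclaw/extensions/local-memory-recall/data/memories.db "SELECT * FROM preferences ORDER BY updated_at DESC;"
```

Q: How do I trigger the nightly processing manually?

A: Just run the processing script:

```bash
bash ~/.openclaw/extensions/local-memory-recall/scripts/nightly-process.sh
```

Q: How do I enable the plugin for all agents?

A: In the configuration from step 6, make sure `"agentIds"` is set to an empty array `[]`.

Q: What if the database file keeps growing?

A: Cleanup is built in:

- Rows in `conversations` are kept for 30 days.
- Rows in `summaries` are kept for 90 days.
- `preferences` are kept indefinitely.

This cleanup runs each time the plugin loads and at the end of every nightly run. You can also clean up manually, for example keeping only the last 7 days of conversations:

```bash
sqlite3 ~/.openclaw/extensions/local-memory-recall/data/memories.db "DELETE FROM conversations WHERE created_at < datetime('now', '-7 days');"
```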
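Before running a manual `DELETE`, it can help to preview which rows fall before the cutoff. SQLite's `datetime('now', '-7 days')` is computed in UTC and formatted as `YYYY-MM-DD HH:MM:SS`. Because `created_at` uses the same text format, the `WHERE` clause is a plain string comparison. The sketch below reproduces that cutoff in JavaScript; `sqliteCutoff` and the sample rows are hypothetical.

```javascript
// Reproduce SQLite's datetime('now', '-N days') to preview a cleanup.
function sqliteCutoff(days, now = new Date()) {
  const cutoff = new Date(now.getTime() - days * 24 * 60 * 60 * 1000);
  // toISOString() is always UTC: "YYYY-MM-DDTHH:MM:SS.sssZ" -> "YYYY-MM-DD HH:MM:SS"
  return cutoff.toISOString().slice(0, 19).replace("T", " ");
}

const now = new Date(Date.UTC(2026, 1, 15, 3, 27, 0));
const cutoff = sqliteCutoff(7, now);
const sample = [
  { id: 1, created_at: "2026-02-01 12:00:00" },
  { id: 2, created_at: "2026-02-10 08:30:00" },
];
// Same text comparison the DELETE's WHERE clause performs.
const wouldDelete = sample.filter((r) => r.created_at < cutoff).map((r) => r.id);
console.log(cutoff, wouldDelete);
```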

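With no cloud costs at all, this local memory-enhancement setup can noticeably improve OpenClaw's conversational continuity and personalization. If you run into problems during deployment or have ideas for improvements, you are welcome to discuss them with other developers on the 云栈社区 (Yunzhan Community) technical forum.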