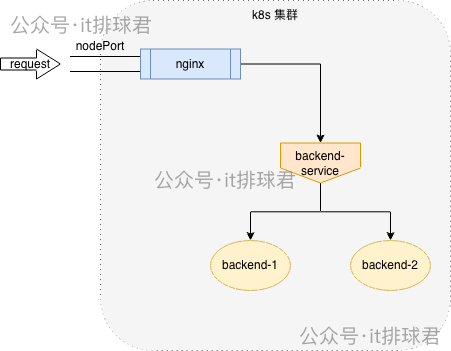

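To balance load, we need a component that distributes traffic across multiple backend Pods. The traditional iptables and ipvs approaches make request tracing difficult, so we use Envoy, a mature proxy, to take over load balancing from them. Envoy can sit either behind Nginx or in front of the backend application; this article deploys it behind Nginx, as a sidecar.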

Environment setup

The test environment is shown below. It contains the backend application Pods, their Service, and an Nginx test application.

    ▶ kubectl get pod -owide
    NAME                          READY   STATUS    RESTARTS   AGE    IP            NODE     NOMINATED NODE   READINESS GATES
    backend-6d4cdd4c68-mqzgj      1/1     Running   4          8d     10.244.0.73   wilson   <none>           <none>
    backend-6d4cdd4c68-qjp9m      1/1     Running   4          7d3h   10.244.0.74   wilson   <none>           <none>
    nginx-test-54d79c7bb8-zmrff   1/1     Running   2          23h    10.244.0.75   wilson   <none>           <none>

    ▶ kubectl get svc
    NAME              TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)        AGE
    backend-service   ClusterIP   10.105.148.194   <none>        10000/TCP      8d
    nginx-test        NodePort    10.110.71.55     <none>        80:30785/TCP   14d

Deploying the Envoy sidecar

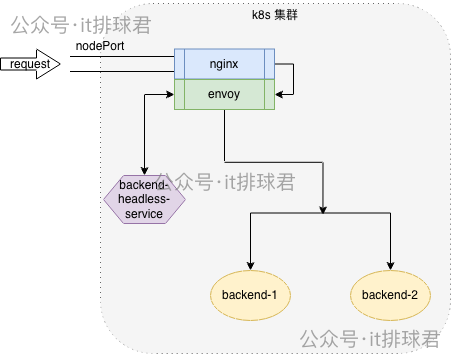

To get fine-grained traffic control and observability, we inject Envoy into the Nginx Pod as a sidecar, a common pattern in cloud-native service meshes.

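Creating the Envoy configuration ConfigMap

First, create a ConfigMap that holds Envoy's configuration file. The configuration defines a listener on port 10000 that forwards every request whose path starts with /test to a cluster named app_service.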

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: envoy-config
    data:
      envoy.yaml: |
        static_resources:
          listeners:
          - name: ingress_listener
            address:
              socket_address:
                address: 0.0.0.0
                port_value: 10000
            filter_chains:
            - filters:
              - name: envoy.filters.network.http_connection_manager
                typed_config:
                  "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
                  stat_prefix: ingress_http
                  http_protocol_options:
                    accept_http_10: true
                  common_http_protocol_options:
                    idle_timeout: 300s
                  codec_type: AUTO
                  route_config:
                    name: local_route
                    virtual_hosts:
                    - name: app
                      domains: ["*"]
                      routes:
                      - match: { prefix: "/test" }
                        route:
                          cluster: app_service
                  http_filters:
                  - name: envoy.filters.http.router
                    typed_config:
                      "@type": type.googleapis.com/envoy.extensions.filters.http.router.v3.Router
                  access_log:
                  - name: envoy.access_loggers.stdout
                    typed_config:
                      "@type": type.googleapis.com/envoy.extensions.access_loggers.stream.v3.StdoutAccessLog
                      log_format:
                        text_format: "[%START_TIME%] \"%REQ(:METHOD)% %REQ(X-ENVOY-ORIGINAL-PATH?:PATH)% %PROTOCOL%\" %RESPONSE_CODE% %BYTES_SENT% %DURATION% %REQ(X-REQUEST-ID)% \"%REQ(USER-AGENT)%\" \"%REQ(X-FORWARDED-FOR)%\" %UPSTREAM_HOST% %UPSTREAM_CLUSTER% %RESPONSE_FLAGS%\n"
          clusters:
          - name: app_service
            connect_timeout: 1s
            type: STRICT_DNS
            lb_policy: ROUND_ROBIN
            load_assignment:
              cluster_name: app_service
              endpoints:
              - lb_endpoints:
                - endpoint:
                    address:
                      socket_address:
                        address: "backend-service"
                        port_value: 10000
        admin:
          access_log_path: "/tmp/access.log"
          address:
            socket_address:
              address: 0.0.0.0
              port_value: 9901
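The route in this configuration uses `prefix: "/test"`, which Envoy evaluates as a plain string-prefix comparison on the request path, so it matches more than the exact path `/test`. The sketch below mimics that rule in Python to show which paths would reach `app_service`. `ROUTES` and `route_for` are hypothetical names used only for illustration and are not part of Envoy. Because the virtual host defines no other route, unmatched paths get no cluster here (Envoy answers those with a 404).

```python
from typing import Optional

# Hypothetical mirror of the single route in the ConfigMap: (prefix, cluster).
ROUTES = [("/test", "app_service")]


def route_for(path: str) -> Optional[str]:
    """Return the cluster of the first route whose prefix matches, else None."""
    for prefix, cluster in ROUTES:
        if path.startswith(prefix):
            return cluster
    return None  # no route matched; Envoy would reply 404 here


if __name__ == "__main__":
    for p in ["/test", "/test/users", "/testing", "/", "/api/test"]:
        print(f"{p!r:16} -> {route_for(p)}")
```

Note that `/testing` matches as well. If only `/test` and its sub-paths should be routed, Envoy's `path_separated_prefix` route matcher may be worth a look.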

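Injecting the sidecar container into the Nginx Deployment

Next, modify the nginx-test Deployment with kubectl patch to add the Envoy container and mount the configuration above. The first operation appends to /spec/template/spec/volumes, so it assumes the Deployment already defines a volumes array; if that field is absent, the patch is rejected.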

    kubectl patch deployment nginx-test --type='json' -p='[
      {
        "op": "add",
        "path": "/spec/template/spec/volumes/-",
        "value": {
          "configMap": {
            "defaultMode": 420,
            "name": "envoy-config"
          },
          "name": "envoy-config"
        }
      },
      {
        "op": "add",
        "path": "/spec/template/spec/containers/-",
        "value": {
          "args": [
            "-c",
            "/etc/envoy/envoy.yaml"
          ],
          "image": "registry.cn-beijing.aliyuncs.com/wilsonchai/envoy:v1.32-latest",
          "imagePullPolicy": "IfNotPresent",
          "name": "envoy",
          "ports": [
            {
              "containerPort": 10000,
              "protocol": "TCP"
            },
            {
              "containerPort": 9901,
              "protocol": "TCP"
            }
          ],
          "volumeMounts": [
            {
              "mountPath": "/etc/envoy",
              "name": "envoy-config"
            }
          ]
        }
      }
    ]'
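A JSON Patch `add` whose path ends in `/-` appends to an array that already exists, so the volume operation in the patch fails when the Deployment has no `volumes` field. As a minimal sketch, the patch document could be generated so that it creates the array when needed. `build_sidecar_patch` and its `has_volumes` parameter are hypothetical, and the caller is assumed to know whether the Deployment already defines volumes (for example by inspecting `kubectl get deployment nginx-test -o json`).

```python
import json

ENVOY_IMAGE = "registry.cn-beijing.aliyuncs.com/wilsonchai/envoy:v1.32-latest"


def build_sidecar_patch(has_volumes: bool) -> list:
    """Build JSON Patch operations that add the Envoy sidecar and its config volume."""
    volume = {
        "name": "envoy-config",
        # 0o644 in octal; JSON has no octal literals, so it serializes as 420.
        "configMap": {"name": "envoy-config", "defaultMode": 0o644},
    }
    container = {
        "name": "envoy",
        "image": ENVOY_IMAGE,
        "imagePullPolicy": "IfNotPresent",
        "args": ["-c", "/etc/envoy/envoy.yaml"],
        "ports": [
            {"containerPort": 10000, "protocol": "TCP"},
            {"containerPort": 9901, "protocol": "TCP"},
        ],
        "volumeMounts": [{"name": "envoy-config", "mountPath": "/etc/envoy"}],
    }
    if has_volumes:
        # Append to the existing volumes array.
        volume_op = {"op": "add", "path": "/spec/template/spec/volumes/-", "value": volume}
    else:
        # Create the array, since "/-" cannot append to a missing field.
        volume_op = {"op": "add", "path": "/spec/template/spec/volumes", "value": [volume]}
    container_op = {"op": "add", "path": "/spec/template/spec/containers/-", "value": container}
    return [volume_op, container_op]


if __name__ == "__main__":
    # The printed JSON can be passed to: kubectl patch deployment nginx-test --type=json -p '<json>'
    print(json.dumps(build_sidecar_patch(has_volumes=False), indent=2))
```

The two branches matter because adding `/spec/template/spec/volumes` with a fresh list would overwrite any volumes that are already there.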

After the patch is applied, the new Pod contains two containers:

    ▶ kubectl get pod -owide -l app=nginx-test
    NAME                          READY   STATUS    RESTARTS   AGE   IP            NODE     NOMINATED NODE   READINESS GATES
    nginx-test-6df974c9f9-qksd4   2/2     Running   0          1d    10.244.0.80   wilson   <none>           <none>

At this point the Envoy sidecar listens on port 10000 inside the Pod and forwards requests whose path starts with /test to backend-service:10000.

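Pointing the Nginx configuration at Envoy

Finally, change the Nginx configuration so that the upstream used for proxying to the backend points at the Envoy instance in the same Pod (127.0.0.1:10000).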

    upstream backend_ups {
        # server backend-service:10000;
        server 127.0.0.1:10000;
    }

    server {
        listen 80;
        listen [::]:80;
        server_name localhost;

        location /test {
            proxy_pass http://backend_ups;
        }
    }

After the change and an Nginx restart, the traffic path becomes: Client -> Nginx -> Envoy Sidecar -> Backend Service.

Initial verification and a problem

Access the Nginx service through its NodePort and watch the Envoy container's log.

    curl 10.22.12.178:30785/test
    kubectl logs -f -l app=nginx-test -c envoy

However, the log shows that UPSTREAM_HOST is still the Service's ClusterIP:

    [2025-12-16T09:45:56.365Z] "GET /test HTTP/1.0" 200 40 0 99032619-a060-481d-8f0d-9d773fad9b12 "curl/7.81.0" "-" 10.105.148.194:10000 app_service -

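The reason is that the upstream address of the Envoy cluster is still the Kubernetes Service (backend-service). With STRICT_DNS, Envoy resolves that name to the Service's single ClusterIP, so it sees exactly one upstream host and has nothing to balance across. Traffic still passes through the Service layer, where kube-proxy's iptables/ipvs rules make the final Pod selection, so Envoy never learns the real backend Pod IP.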
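To make the effect concrete, here is a small simulation of what a round-robin balancer can log when it only knows the addresses that DNS hands it. This is a sketch, not Envoy code: `simulate_round_robin` is a hypothetical helper, and the address lists are taken from the outputs shown earlier in this article (the ClusterIP of `backend-service` and the two backend Pod IPs from the environment listing).

```python
from itertools import cycle, islice


def simulate_round_robin(resolved_hosts, requests):
    """Return the upstream host picked for each request, cycling through the hosts in order."""
    return list(islice(cycle(resolved_hosts), requests))


# A ClusterIP Service resolves to one virtual IP, so the balancer has a single "host".
cluster_ip_view = ["10.105.148.194:10000"]
# If DNS returned the Pod IPs instead, the balancer could choose between them.
pod_view = ["10.244.0.73:10000", "10.244.0.74:10000"]

if __name__ == "__main__":
    print(simulate_round_robin(cluster_ip_view, 4))
    print(simulate_round_robin(pod_view, 4))
```

With a single resolved address every request is attributed to the same host, no matter which Pod actually served it.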

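Solution: use a Headless Service

To fix this, Envoy has to load-balance across the Pods directly. A Kubernetes Headless Service is designed for exactly this case: it is not assigned a ClusterIP, and a DNS query for it returns the list of backend Pod IPs.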
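One way to see the difference is to resolve a Service name from inside a Pod and look at the answers. The sketch below uses Python's standard `socket.getaddrinfo`. `resolve_ips` is a hypothetical helper, and the script is assumed to run inside the cluster, in the same namespace as the Services, with the names to look up passed on the command line.

```python
import socket
import sys


def resolve_ips(hostname: str, port: int = 10000) -> list:
    """Return the sorted, de-duplicated IP addresses that `hostname` resolves to."""
    infos = socket.getaddrinfo(hostname, port, proto=socket.IPPROTO_TCP)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    return sorted({info[4][0] for info in infos})


if __name__ == "__main__":
    # Example: python resolve.py backend-service backend-headless-service
    for name in sys.argv[1:]:
        try:
            print(name, "->", resolve_ips(name))
        except socket.gaierror as exc:
            print(name, "-> lookup failed:", exc)
```

A ClusterIP Service should yield a single address, while a Headless Service should yield one address per ready Pod.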

- Create the Headless Service

      apiVersion: v1
      kind: Service
      metadata:
        name: backend-headless-service
      spec:
        clusterIP: None  # None is what makes the Service headless
        selector:
          app: backend
        ports:
        - name: http
          port: 10000
          targetPort: 10000

- Point the Envoy configuration at the Headless Service

  Update the clusters section of the ConfigMap so that the address is the Headless Service.

      ...
      clusters:
      - name: app_service
        connect_timeout: 1s
        type: STRICT_DNS
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: app_service
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: "backend-headless-service"  # changed to the Headless Service name
                    port_value: 10000
      ...

After updating the configuration and restarting the Envoy sidecar, send requests again and check the log:

    [2025-12-16T10:05:56.365Z] "GET /test HTTP/1.0" 200 40 0 2b029187-cddb-4278-99b8-2953a7e841a0 "curl/7.81.0" "-" 10.244.0.81:10000 app_service -
    [2025-12-16T10:05:57.453Z] "GET /test HTTP/1.0" 200 40 1 384f9394-7ff9-4abb-b0f8-f9b69f2ba992 "curl/7.81.0" "-" 10.244.0.82:10000 app_service -

The UPSTREAM_HOST field now shows the real backend Pod IPs (10.244.0.81 and 10.244.0.82), which meets the initial tracing goal.
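To check the distribution over more than a couple of requests, the access log can be tallied by upstream host. The following sketch assumes the exact `text_format` defined in the ConfigMap, where `%UPSTREAM_HOST%` is the ninth field once quoted fields are kept together. `upstream_host` and `tally_upstreams` are hypothetical helper names, and `SAMPLE` holds the two log lines shown in this article.

```python
import shlex
from collections import Counter

# Position of %UPSTREAM_HOST% in the log format from the ConfigMap (0-based).
UPSTREAM_HOST_FIELD = 8


def upstream_host(line: str) -> str:
    """Extract the upstream host (ip:port) from one access-log line."""
    fields = shlex.split(line)  # keeps "GET /test HTTP/1.0" together as one field
    return fields[UPSTREAM_HOST_FIELD]


def tally_upstreams(lines) -> Counter:
    """Count requests per upstream host, skipping blank lines."""
    return Counter(upstream_host(line) for line in lines if line.strip())


SAMPLE = [
    '[2025-12-16T10:05:56.365Z] "GET /test HTTP/1.0" 200 40 0 2b029187-cddb-4278-99b8-2953a7e841a0 "curl/7.81.0" "-" 10.244.0.81:10000 app_service -',
    '[2025-12-16T10:05:57.453Z] "GET /test HTTP/1.0" 200 40 1 384f9394-7ff9-4abb-b0f8-f9b69f2ba992 "curl/7.81.0" "-" 10.244.0.82:10000 app_service -',
]

if __name__ == "__main__":
    for host, count in sorted(tally_upstreams(SAMPLE).items()):
        print(host, count)
```

In practice the lines could be read from `sys.stdin`, fed by `kubectl logs -l app=nginx-test -c envoy`, instead of the hard-coded sample.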

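Architecture summary and optimization

The current architecture is shown in the figure below:

This setup gives every Nginx Pod its own Envoy sidecar. To save resources, Envoy can instead be deployed as a DaemonSet: one Envoy instance runs on each node, and the outbound traffic of all Pods on that node is pointed at the local Envoy. This per-node model is fairly common in service meshes and uses fewer resources than running one proxy per Pod.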