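This article documents deploying Elasticsearch and Kibana on K8s, collecting distributed traces from a Java 1.8 application with the Elastic APM Agent, and using Filebeat to filter structured business logs and ship them to ES.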

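APM handles distributed tracing and performance metrics, while Filebeat handles the complete business logs; the two have clearly separated roles and together close the observability loop. The whole setup is built on Elastic APM Server 7.17.15 + Filebeat 7.17.15 + Spring Boot 2.1.6.RELEASE (JDK 1.8) and is intended as a lightweight observability stack for production environments.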

Source code:

https://gitee.com/jsonhc/springboot-manager.git

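1. Deploy Elasticsearch on K8s

Because no StorageClass is configured for this deployment, Elasticsearch stores its data in a hostPath volume. The data lives only on the local disk of the k8smaster node, with no replication or dynamic provisioning, and APM Server and Kibana mount no data volume at all, so this setup is meant only for verifying the tracing and logging pipelines.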

kubectl create namespace elk

Create the local mount directory on the k8smaster node and open up its permissions:

[root@k8smaster elk]# mkdir /opt/elasticsearch-data

[root@k8smaster elk]# chmod 777 /opt/elasticsearch-data

01-elasticsearch.yaml is as follows (single-node deployment, security disabled):

# 01-elasticsearch.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: elasticsearch
  namespace: elk
spec:
  replicas: 1
  selector:
    matchLabels:
      app: elasticsearch
  template:
    metadata:
      labels:
        app: elasticsearch
    spec:
      nodeName: k8smaster # pin the Pod to the single node
      containers:
        - name: elasticsearch
          image: docker.elastic.co/elasticsearch/elasticsearch:7.17.15
          ports:
            - containerPort: 9200
          env:
            - name: ES_JAVA_OPTS
              value: "-Xms1g -Xmx1g"
            - name: discovery.type
              value: single-node
            - name: xpack.security.enabled
              value: "false"
            - name: bootstrap.memory_lock
              value: "false"
          volumeMounts:
            - name: data
              mountPath: /usr/share/elasticsearch/data
          resources:
            limits:
              memory: "2Gi"
              cpu: "1000m"
      volumes:
        - name: data
          hostPath:
            path: /opt/elasticsearch-data
            type: DirectoryOrCreate
---
apiVersion: v1
kind: Service
metadata:
  name: elasticsearch
  namespace: elk
spec:
  selector:
    app: elasticsearch
  ports:
    - port: 9200

Deploy and verify:

[root@k8smaster elk]# kubectl create -f 01-elasticsearch.yaml

deployment.apps/elasticsearch created

service/elasticsearch created

[root@k8smaster elk]# kubectl -n elk get pod

NAME READY STATUS RESTARTS AGE

elasticsearch-799dc746c-w57nr 1/1 Running 0 11s

Exec into the Pod and check the cluster health:

[root@k8smaster elk]# kubectl -n elk exec -it elasticsearch-799dc746c-w57nr -- sh

sh-5.0# curl -s http://localhost:9200/_cluster/health

{"cluster_name":"docker-cluster","status":"green","timed_out":false,"number_of_nodes":1,"number_of_data_nodes":1,"active_primary_shards":1,"active_shards":1,"relocating_shards":0,"initializing_shards":0,"unassigned_shards":0,"delayed_unassigned_shards":0,"number_of_pending_tasks":0,"number_of_in_flight_fetch":0,"task_max_waiting_in_queue_millis":0,"active_shards_percent_as_number":100.0}

✅ The status is green, which means the single-node cluster is ready.
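
The manual check above can also be scripted. The following is a minimal sketch, not part of the original setup: the function names health_status and wait_for_es are hypothetical, and the sketch assumes curl is available wherever it runs and that the Elasticsearch URL passed in is reachable from there.

```shell
# health_status: read a _cluster/health JSON document on stdin
# and print the value of its "status" field (green, yellow or red).
health_status() {
  sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([a-z]*\)".*/\1/p'
}

# wait_for_es URL [TRIES]: poll URL/_cluster/health every 2 seconds until
# the status is green or yellow, or give up after TRIES attempts (default 30).
wait_for_es() {
  url=$1
  tries=${2:-30}
  i=0
  while [ "$i" -lt "$tries" ]; do
    status=$(curl -s "$url/_cluster/health" | health_status)
    case $status in
      green|yellow)
        echo "$status"
        return 0
        ;;
    esac
    i=$((i + 1))
    sleep 2
  done
  return 1
}
```

One way to use it from the master node is to run kubectl -n elk port-forward svc/elasticsearch 9200:9200 in one terminal and wait_for_es http://localhost:9200 in another.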

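2. Deploy APM Server on K8s

APM Server is the central relay component of the Elastic APM architecture: it receives the trace data reported by the Java Agent and writes it to Elasticsearch.

02-apm-server.yaml contains a ConfigMap, a Deployment, and a Service: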

# 02-apm-server.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: apm-server-config
  namespace: elk
data:
  apm-server.yml: |
    apm-server:
      host: "0.0.0.0:8200"
      # Allow all Agents to connect (use a token in production)
      auth:
        secret_token: ""
        api_key:
          enabled: false
      # RUM (Real User Monitoring) settings
      rum:
        enabled: true
        allow_origins: ['*']
        allow_headers: ["x-requested-with", "content-type"]
    output:
      elasticsearch:
        hosts: ["http://elasticsearch:9200"]
        enabled: true
    # Logging settings
    logging:
      level: info
      to_files: false
      to_stderr: true
    # Monitoring settings
    monitoring:
      enabled: false
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: apm-server
  namespace: elk
spec:
  replicas: 1
  selector:
    matchLabels:
      app: apm-server
  template:
    metadata:
      labels:
        app: apm-server
    spec:
      containers:
        - name: apm-server
          image: docker.elastic.co/apm/apm-server:7.17.15
          ports:
            - containerPort: 8200
          env:
            - name: ELASTICSEARCH_HOST
              value: "elasticsearch:9200"
          volumeMounts:
            - name: config
              mountPath: /usr/share/apm-server/apm-server.yml
              subPath: apm-server.yml
          resources:
            requests:
              memory: "512Mi"
              cpu: "250m"
            limits:
              memory: "1Gi"
              cpu: "500m"
          livenessProbe:
            httpGet:
              path: /
              port: 8200
            initialDelaySeconds: 30
          readinessProbe:
            httpGet:
              path: /
              port: 8200
            initialDelaySeconds: 10
      volumes:
        - name: config
          configMap:
            name: apm-server-config
---
apiVersion: v1
kind: Service
metadata:
  name: apm-server
  namespace: elk
spec:
  type: ClusterIP
  selector:
    app: apm-server
  ports:
    - port: 8200
      targetPort: 8200

Apply the configuration:

[root@k8smaster elk]# kubectl apply -f 02-apm-server.yaml

configmap/apm-server-config configured

deployment.apps/apm-server configured

service/apm-server configured

Confirm the Pods are running:

[root@k8smaster elk]# kubectl -n elk get pod

NAME READY STATUS RESTARTS AGE

apm-server-686d65d7d7-v5l2k 1/1 Running 0 7m22s

elasticsearch-799dc746c-w57nr 1/1 Running 0 35m

Check the APM Server startup log:

[root@k8smaster elk]# kubectl -n elk logs -f apm-server-686d65d7d7-v5l2k

{"log.level":"info","@timestamp":"2026-03-14T07:56:11.507Z","log.origin":{"file.name":"instance/beat.go","file.line":698},"message":"Home path: [/usr/share/apm-server] Config path: [/usr/share/apm-server] Data path: [/usr/share/apm-server/data] Logs path: [/usr/share/apm-server/logs] Hostfs Path: [/]","service.name":"apm-server","ecs.version":"1.6.0"}

{"log.level":"info","@timestamp":"2026-03-14T07:56:11.510Z","log.origin":{"file.name":"instance/beat.go","file.line":706},"message":"Beat ID: f7c40709-1fcf-4c5d-a970-1d07aa53306d","service.name":"apm-server","ecs.version":"1.6.0"}

{"log.level":"info","@timestamp":"2026-03-14T07:56:11.513Z","log.logger":"seccomp","log.origin":{"file.name":"seccomp/seccomp.go","file.line":124},"message":"Syscall filter successfully installed","service.name":"apm-server","ecs.version":"1.6.0"}

...

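💡 To enable agent remote configuration (for example, dynamic sampling rates), add an apm-server.kibana section (enabled and host) to apm-server.yml so that APM Server can reach Kibana; the current example is a minimal working configuration.

3. Deploy Kibana on K8s

Kibana provides the APM UI and the Discover view for logs, and serves as the single entry point for observability.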

03-kibana.yaml is as follows (Chinese UI locale, APM UI enabled):

# 03-kibana.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kibana
  namespace: elk
spec:
  replicas: 1
  selector:
    matchLabels:
      app: kibana
  template:
    metadata:
      labels:
        app: kibana
    spec:
      containers:
        - name: kibana
          image: docker.elastic.co/kibana/kibana:7.17.15
          ports:
            - containerPort: 5601
          env:
            - name: ELASTICSEARCH_HOSTS
              value: '["http://elasticsearch:9200"]'
            - name: I18N_LOCALE
              value: "zh-CN"
            - name: XPACK_SECURITY_ENABLED
              value: "false"
            - name: XPACK_APM_UI_ENABLED
              value: "true" # enable the APM UI
          resources:
            requests:
              memory: "512Mi"
              cpu: "250m"
            limits:
              memory: "1Gi"
              cpu: "500m"
---
apiVersion: v1
kind: Service
metadata:
  name: kibana
  namespace: elk
spec:
  type: NodePort
  selector:
    app: kibana
  ports:
    - port: 5601
      targetPort: 5601
      nodePort: 30001

After deployment, open: http://192.168.213.201:30001/

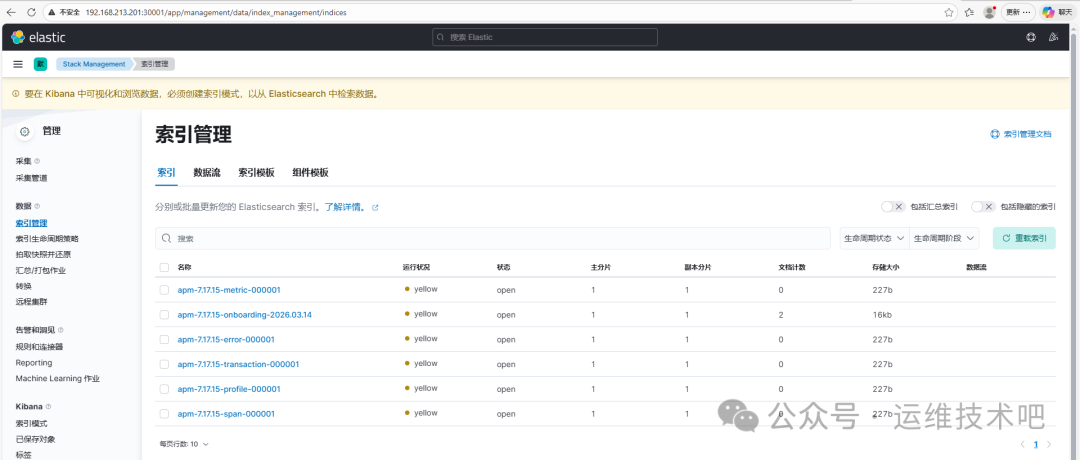

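✅ The screenshot shows that the apm-7.17.15-* indices were created automatically, which indicates that APM Server has connected to ES and is ready to receive data.

4. Integrate the APM Agent into the SpringBoot application

This project uses the springboot-manager sample project, built on JDK 1.8; it depends on a MySQL initialization script (omitted here).

Modify the Dockerfile to inject the APM Agent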

# Base image (build stage)
FROM registry.cn-hangzhou.aliyuncs.com/jsonhc/maven:3.5.0-jdk-8-alpine
WORKDIR /app
COPY . /app
COPY settings.xml /usr/share/maven/conf/settings.xml
RUN mvn -s settings.xml -f pom.xml -U clean package -Dmaven.test.skip=true

# Runtime stage
FROM registry.cn-hangzhou.aliyuncs.com/wb_public/openjdk:8-jre
WORKDIR /app
COPY --from=0 /app/target/*.jar /app/manager.jar
# Download the Elastic APM Java agent into the image
ADD https://repo1.maven.org/maven2/co/elastic/apm/elastic-apm-agent/1.43.0/elastic-apm-agent-1.43.0.jar /app/elastic-apm-agent.jar

# Time zone
RUN ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
RUN echo 'Asia/Shanghai' >/etc/timezone

# Start the service with the APM agent attached
ENTRYPOINT ["java","-javaagent:/app/elastic-apm-agent.jar","-jar","/app/manager.jar"]

# Exposed port
EXPOSE 8080

Build and push the image:

[root@k8sworker springboot-manager]# docker build -t registry.cn-hangzhou.aliyuncs.com/jsonhc/springboot:1.3 .

[root@k8sworker springboot-manager]# docker push registry.cn-hangzhou.aliyuncs.com/jsonhc/springboot:1.3

K8s deployment YAML (with the APM environment variables)

k8s-deployment.yaml:

# k8s-deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: springboot-manager
  namespace: default
spec:
  replicas: 1
  selector:
    matchLabels:
      app: springboot-manager
  template:
    metadata:
      labels:
        app: springboot-manager
    spec:
      containers:
        - name: app
          image: registry.cn-hangzhou.aliyuncs.com/jsonhc/springboot:1.3
          ports:
            - containerPort: 8080
          env:
            - name: SPRING_PROFILES_ACTIVE
              value: "prod"
            # Pass the application name
            - name: SPRING_APPLICATION_NAME
              value: "springboot-manager"
            # APM Agent settings (the agent jar is already baked into the image)
            - name: ELASTIC_APM_SERVER_URL
              value: "http://apm-server.elk.svc.cluster.local:8200"
            - name: ELASTIC_APM_SERVICE_NAME
              value: "springboot-manager"
            - name: ELASTIC_APM_LOG_SENDING
              value: "true"
          resources:
            requests:
              memory: "512Mi"
              cpu: "250m"
            limits:
              memory: "1Gi"
              cpu: "500m"
---
apiVersion: v1
kind: Service
metadata:
  name: springboot-manager
  namespace: default
spec:
  type: NodePort
  selector:
    app: springboot-manager
  ports:
    - name: http
      port: 8080
      targetPort: 8080
      nodePort: 30080

Deploy and verify:
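
The ELASTIC_APM_* variables in the manifest follow the Java agent's naming rule for environment variables: the configuration option name in upper case, prefixed with ELASTIC_APM_. The helper below is a small sketch of that rule; the function name apm_env_name is hypothetical and exists only for illustration.

```shell
# apm_env_name OPTION: print the environment variable name that corresponds
# to an Elastic APM agent configuration option (for example service_name).
apm_env_name() {
  printf 'ELASTIC_APM_%s\n' "$(printf '%s' "$1" | tr '[:lower:]' '[:upper:]')"
}
```

For example, apm_env_name server_url prints ELASTIC_APM_SERVER_URL. To see which agent settings a running Pod actually received, kubectl exec deploy/springboot-manager -c app -- env | grep '^ELASTIC_APM_' lists them.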

[root@k8smaster elk]# kubectl create -f k8s-deployment.yaml

deployment.apps/springboot-manager created

service/springboot-manager created

[root@k8smaster elk]# kubectl get pod

NAME READY STATUS RESTARTS AGE

springboot-manager-d4bb48db9-dc5jm 1/1 Running 0 3s

Watch the APM Server log to see the Java Agent connect successfully:

{

"log.level":"info",

"@timestamp":"2026-03-14T08:43:36.192Z",

"log.logger":"request",

"message":"request ok",

"user_agent.original":"apm-agent-java/1.43.0 (springboot-manager 0.0.1-SNAPSHOT)",

"http.response.status_code":200,

"ecs.version":"1.6.0"

}

{

"log.level":"info",

"@timestamp":"2026-03-14T08:43:46.791Z",

"log.logger":"request",

"message":"request accepted",

"url.original":"/intake/v2/events",

"http.request.method":"POST",

"user_agent.original":"apm-agent-java/1.43.0 (springboot-manager 0.0.1-SNAPSHOT)",

"http.response.status_code":202,

"ecs.version":"1.6.0"

}

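✅ 202 Accepted indicates that the trace data was accepted by APM Server.

⚠️ Note: the message forbidden request: Agent remote configuration is disabled appears in the log because the Kibana integration that APM Server needs for remote configuration is not enabled. It does not affect trace reporting and can be ignored.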
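
To confirm intake at a glance rather than reading individual entries, the request log can be summarised by status code. This is a sketch under two assumptions: APM Server writes each log entry as a single JSON line (the entries above are pretty-printed for readability), and the field names are the ones shown in those entries. The function name intake_status_counts is hypothetical.

```shell
# intake_status_counts: read APM Server JSON log lines on stdin and print
# "<status> <count>" for every HTTP status returned on /intake/v2/events.
intake_status_counts() {
  grep -F '"url.original":"/intake/v2/events"' |
    sed -n 's/.*"http.response.status_code":\([0-9][0-9]*\).*/\1/p' |
    sort | uniq -c | awk '{print $2, $1}'
}
```

A possible invocation is kubectl -n elk logs deploy/apm-server | intake_status_counts; a healthy agent connection should show mostly 202 responses.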

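5. Deploy Filebeat on K8s (Sidecar mode)

To avoid coupling to log file permissions and paths on the node, this article uses the Sidecar pattern: Filebeat shares an emptyDir log volume with the SpringBoot container and collects the JSON-formatted logs in real time.

Step 1: Configure SpringBoot to output JSON logs

Modify src/main/resources/logback-spring.xml so that the k8s and prod profiles write to /var/log/app/app.log and add the traceId and service_name fields: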

<?xml version="1.0" encoding="UTF-8"?>
<configuration>
    <include resource="org/springframework/boot/logging/logback/defaults.xml"/>
    <property name="LOG_LEVEL" value="INFO"/>
    <!-- Development environment: relative path -->
    <property name="LOG_PATH" value="log"/>
    <property name="LOG_FILE" value="project_manager.log"/>
    <property name="LOG_HISTORY" value="project_manager.%d{yyyy-MM-dd}.log"/>

    <!-- Console output (all environments) -->
    <appender name="CONSOLE" class="ch.qos.logback.core.ConsoleAppender">
        <encoder>
            <pattern>${CONSOLE_LOG_PATTERN}</pattern>
        </encoder>
    </appender>

    <!-- ==================== JSON file output (k8s and prod profiles only) ==================== -->
    <springProfile name="k8s,prod">
        <!-- Separate path variables to avoid clashing with the development ones -->
        <property name="K8S_LOG_PATH" value="/var/log/app"/>
        <property name="K8S_LOG_FILE" value="app.log"/>
        <property name="K8S_LOG_HISTORY" value="app.%d{yyyy-MM-dd}.log"/>
        <appender name="JSON_FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">
            <file>${K8S_LOG_PATH}/${K8S_LOG_FILE}</file>
            <encoder class="net.logstash.logback.encoder.LogstashEncoder">
                <includeMdcKeyName>traceId</includeMdcKeyName>
                <!-- MDC keys set by the Elastic APM Java agent's log correlation -->
                <includeMdcKeyName>trace.id</includeMdcKeyName>
                <includeMdcKeyName>transaction.id</includeMdcKeyName>
                <customFields>{"service_name":"${SPRING_APPLICATION_NAME:-springboot-manager}"}</customFields>
            </encoder>
            <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
                <fileNamePattern>${K8S_LOG_PATH}/${K8S_LOG_HISTORY}</fileNamePattern>
                <maxHistory>7</maxHistory>
            </rollingPolicy>
        </appender>
        <appender name="ASYNC_JSON_FILE" class="ch.qos.logback.classic.AsyncAppender">
            <queueSize>512</queueSize>
            <appender-ref ref="JSON_FILE"/>
        </appender>
    </springProfile>

    <!-- ==================== Per-environment root logger ==================== -->
    <!-- K8s/production: console + JSON file -->
    <springProfile name="k8s,prod">
        <root level="${LOG_LEVEL}">
            <appender-ref ref="CONSOLE"/>
            <appender-ref ref="ASYNC_JSON_FILE"/>
        </root>
    </springProfile>
</configuration>

Add the Maven dependency (pom.xml):

<!-- pom.xml -->
<dependency>
    <groupId>net.logstash.logback</groupId>
    <artifactId>logstash-logback-encoder</artifactId>
    <version>6.6</version>
</dependency>

Rebuild the image:

[root@k8sworker springboot-manager]# docker build -t registry.cn-hangzhou.aliyuncs.com/jsonhc/springboot:1.7 .

[root@k8sworker springboot-manager]# docker push registry.cn-hangzhou.aliyuncs.com/jsonhc/springboot:1.7

Step 2: Deploy the Sidecar YAML

filebeat-springboot.yaml:

# filebeat-springboot.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: springboot-manager
  namespace: default
spec:
  replicas: 1
  selector:
    matchLabels:
      app: springboot-manager
  template:
    metadata:
      labels:
        app: springboot-manager
    spec:
      containers:
        # Main application container
        - name: app
          image: registry.cn-hangzhou.aliyuncs.com/jsonhc/springboot:1.7
          ports:
            - containerPort: 8080
          env:
            - name: SPRING_PROFILES_ACTIVE
              value: "prod"
            - name: SPRING_APPLICATION_NAME
              value: "springboot-manager"
            - name: ELASTIC_APM_SERVER_URL
              value: "http://apm-server.elk.svc.cluster.local:8200"
            - name: ELASTIC_APM_SERVICE_NAME
              value: "springboot-manager"
            - name: ELASTIC_APM_LOG_SENDING
              value: "true"
          volumeMounts:
            - name: logs
              mountPath: /var/log/app
          resources:
            limits:
              memory: "1Gi"
              cpu: "500m"
        # Filebeat Sidecar container
        - name: filebeat
          image: docker.elastic.co/beats/filebeat:7.17.15
          args:
            - -c
            - /etc/filebeat/filebeat.yml
            - -e
          env:
            - name: NODE_NAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
          volumeMounts:
            - name: logs
              mountPath: /var/log/app
              readOnly: true
            - name: filebeat-config
              mountPath: /etc/filebeat/filebeat.yml
              subPath: filebeat.yml
          resources:
            limits:
              memory: 128Mi
              cpu: 100m
      volumes:
        - name: logs
          emptyDir: {} # shared log directory
        - name: filebeat-config
          configMap:
            name: filebeat-sidecar-config
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: filebeat-sidecar-config
  namespace: default
data:
  filebeat.yml: |
    filebeat.inputs:
      - type: log
        enabled: true
        paths:
          - /var/log/app/*.log
        # LogstashEncoder writes one JSON object per line, so an event starts at "{";
        # any other line is appended to the previous event
        multiline:
          pattern: '^\{'
          negate: true
          match: after
          timeout: 5s
        fields:
          app_name: springboot-manager
          pod_name: ${POD_NAME}   # Pod name, from metadata.name
          node_name: ${NODE_NAME} # node name, from spec.nodeName
        fields_under_root: true
    output:
      elasticsearch:
        hosts: ["http://elasticsearch.elk.svc.cluster.local:9200"]
        index: "springboot-logs-%{+yyyy.MM.dd}"
    setup.template:
      name: "springboot-logs"
      pattern: "springboot-logs-*"
      enabled: true
    setup.ilm:
      enabled: false
    logging:
      level: info
      to_stderr: true
---
apiVersion: v1
kind: Service
metadata:
  name: springboot-manager
  namespace: default
spec:
  type: NodePort
  selector:
    app: springboot-manager
  ports:
    - port: 8080
      targetPort: 8080
      nodePort: 30080

After deployment, check the main container log (to confirm the APM Agent loaded):
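
The multiline settings decide how physical lines are grouped into events, and the choice of pattern matters for JSON logs. The sketch below emulates only the negate: true plus match: after rule (a line that does not match the pattern is appended to the previous event) so the effect of a pattern can be tried on sample input. It is an illustration, not Filebeat's implementation: it ignores timeout and Filebeat's line limits, joins lines with a space, and the function name group_multiline is hypothetical.

```shell
# group_multiline PATTERN: read lines on stdin and print one event per line.
# A line matching PATTERN (an extended regular expression) starts a new event;
# a non-matching line is appended to the current event.
group_multiline() {
  pat=$1
  event=""
  have_event=0
  while IFS= read -r line || [ -n "$line" ]; do
    if printf '%s\n' "$line" | grep -Eq -- "$pat"; then
      # Matching line: flush the event collected so far, then start a new one.
      if [ "$have_event" -eq 1 ]; then
        printf '%s\n' "$event"
      fi
      event=$line
      have_event=1
    elif [ "$have_event" -eq 1 ]; then
      # Non-matching line: continuation of the current event.
      event="$event $line"
    else
      # Non-matching first line: it still opens an event.
      event=$line
      have_event=1
    fi
  done
  if [ "$have_event" -eq 1 ]; then
    printf '%s\n' "$event"
  fi
}
```

Feeding three single-line JSON records through group_multiline '^[{]' yields three events, while a date pattern such as '^[0-9]{4}-[0-9]{2}-[0-9]{2}' matches none of them and collapses all three into a single event. A date pattern therefore suits plain-text logs whose entries begin with a timestamp, not one-object-per-line JSON.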

[root@k8smaster elk]# kubectl logs -f springboot-manager-57989586bd-vdr5t -c app

2026-03-14 17:47:41,220 [main] INFO co.elastic.apm.agent.configuration.StartupInfo - Starting Elastic APM 1.43.0 as springboot-manager (0.0.1-SNAPSHOT) on Java 1.8.0_342 ...

2026-03-14 17:48:06,093 [main] INFO co.elastic.apm.agent.impl.ElasticApmTracer - Tracer switched to RUNNING state

2026-03-14 17:48:07,592 [elastic-apm-server-healthcheck] INFO co.elastic.apm.agent.report.ApmServerHealthChecker - Elastic APM server is available: { "build_date": "2023-11-10T18:50:41Z", "version": "7.17.15" }

...

2026-03-14 17:49:59.988 [main] INFO c.c.project.CompanyProjectApplication :

----------------------------------------------------------

Application 'springboot-manager' is running!

Access URLs:

Login: http://10.244.162.219:8080/manager

Doc: http://10.244.162.219:8080/manager/doc.html

----------------------------------------------------------

Then check the Filebeat container log:

[root@k8smaster elk]# kubectl logs -f springboot-manager-57989586bd-2n4pd -c filebeat

2026-03-14T10:00:26.589Z INFO [crawler] beater/crawler.go:71 Loading Inputs: 1

2026-03-14T10:00:26.686Z INFO [input] log/input.go:171 Configured paths: [/var/log/app/*.log] {"input_id": "057db446-6dde-4359-a01e-17559dd5b444"}

2026-03-14T10:01:16.690Z INFO [input.harvester] log/harvester.go:310 Harvester started for paths: [/var/log/app/*.log] {"input_id": "057db446-6dde-4359-a01e-17559dd5b444", "source": "/var/log/app/app.log", ...}

2026-03-14T10:01:29.742Z INFO template/load.go:110 Template "springboot-logs" already exists and will not be overwritten.

2026-03-14T10:01:29.743Z INFO [publisher_pipeline_output] pipeline/output.go:151 Connection to backoff(elasticsearch(http://elasticsearch.elk.svc.cluster.local:9200)) established

2026-03-14T10:01:56.593Z INFO [monitoring] log/log.go:184 Non-zero metrics in the last 30s {"monitoring": {"metrics": {"filebeat": {"events": {"added": 2, "done": 2}, "harvester": {"open_files": 1, "running": 1}}, ...}}}

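⚠️ The log occasionally shows error fetching io.pressure. This is because the host uses cgroup v2 while Filebeat 7.17 targets v1 by default; it is a compatibility notice that does not affect log collection or delivery and can be safely ignored.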

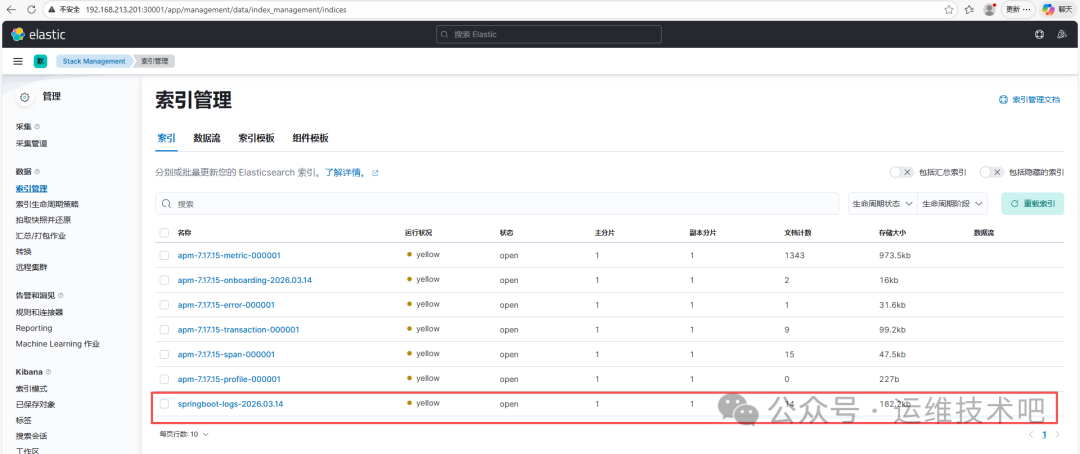

View the ES index and the collected logs

In Kibana, go to Stack Management → Index Management to see the new index:

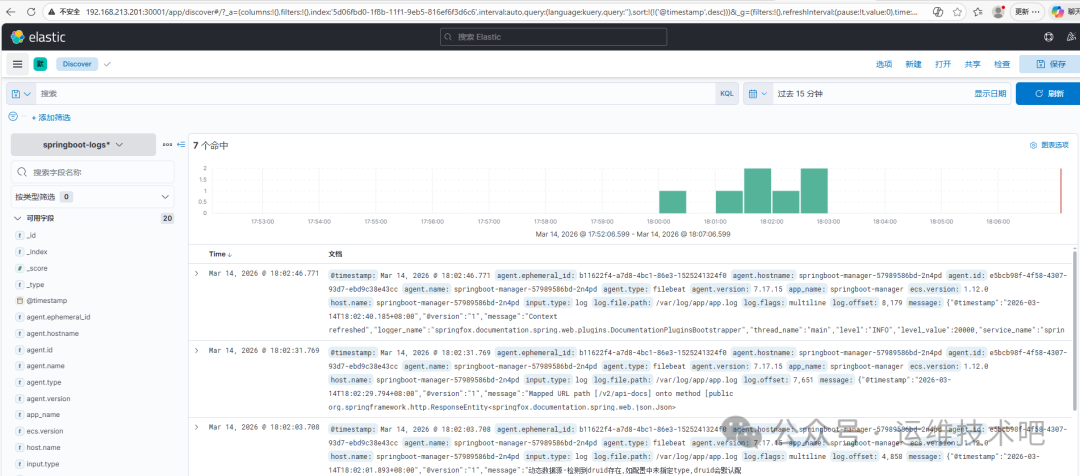

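After creating the index pattern springboot-logs-*, open the Discover page to browse the structured logs:

Each log entry automatically carries:

- agent.name: springboot-manager-57989588d-2ndpd
- host.name: same as above (the Pod name)
- message: the raw log line in JSON format (including traceId, level, thread_name, etc.)
- input.type: log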
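
Because message holds the raw JSON line, individual values such as level or traceId can be pulled out of it when inspecting exported or tailed logs. The helper below is a rough sketch: the function name json_string_field is hypothetical, it handles only simple string values without escaped quotes, and it is no substitute for a real JSON parser.

```shell
# json_string_field NAME: read JSON lines on stdin and print the string value
# of field NAME for each line that contains it.
json_string_field() {
  sed -n "s/.*\"$1\":\"\\([^\"]*\\)\".*/\\1/p"
}
```

For instance, kubectl exec deploy/springboot-manager -c app -- cat /var/log/app/app.log | json_string_field level prints the level of every entry in the current log file.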

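At this point, the SpringBoot application running on Kubernetes has:

- ✅ The APM Agent attached at startup and reporting trace data (apm-* indices)
- ✅ A Filebeat Sidecar collecting JSON logs in real time (springboot-logs-* indices)
- ✅ Unified visualization and analysis in Kibana (APM UI + Discover)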

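This architecture has been validated multiple times in the Java and Ops/DevOps/SRE boards of the 云栈社区 community and suits small and mid-size teams that want to stand up observability infrastructure quickly.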

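🌐 Likewise, applications written in Python, Node.js, Go, and other languages only need the Elastic APM Agent for that language (for example, the Python agent, published as the elastic-apm package) to reuse the same ELK backend and Kibana configuration.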